Statistical inference with mathematical models

Lecture 7

Tuesday 7th April, 2026

Making an error

Logic of hypothesis testing (review)

- What are the chances we would collect the data we did if the null hypothesis were true?

- More precisely: what is the probability of observing our test statistic, or a more extreme one, if the null hypothesis is true?

- This probability is called the p-value

- If the p-value is sufficiently low (\(p < \alpha\)), we reject the null hypothesis

- If the p-value is not that low (\(p \ge \alpha\)), we fail to reject the null hypothesis

Types of error (hypothesis testing)

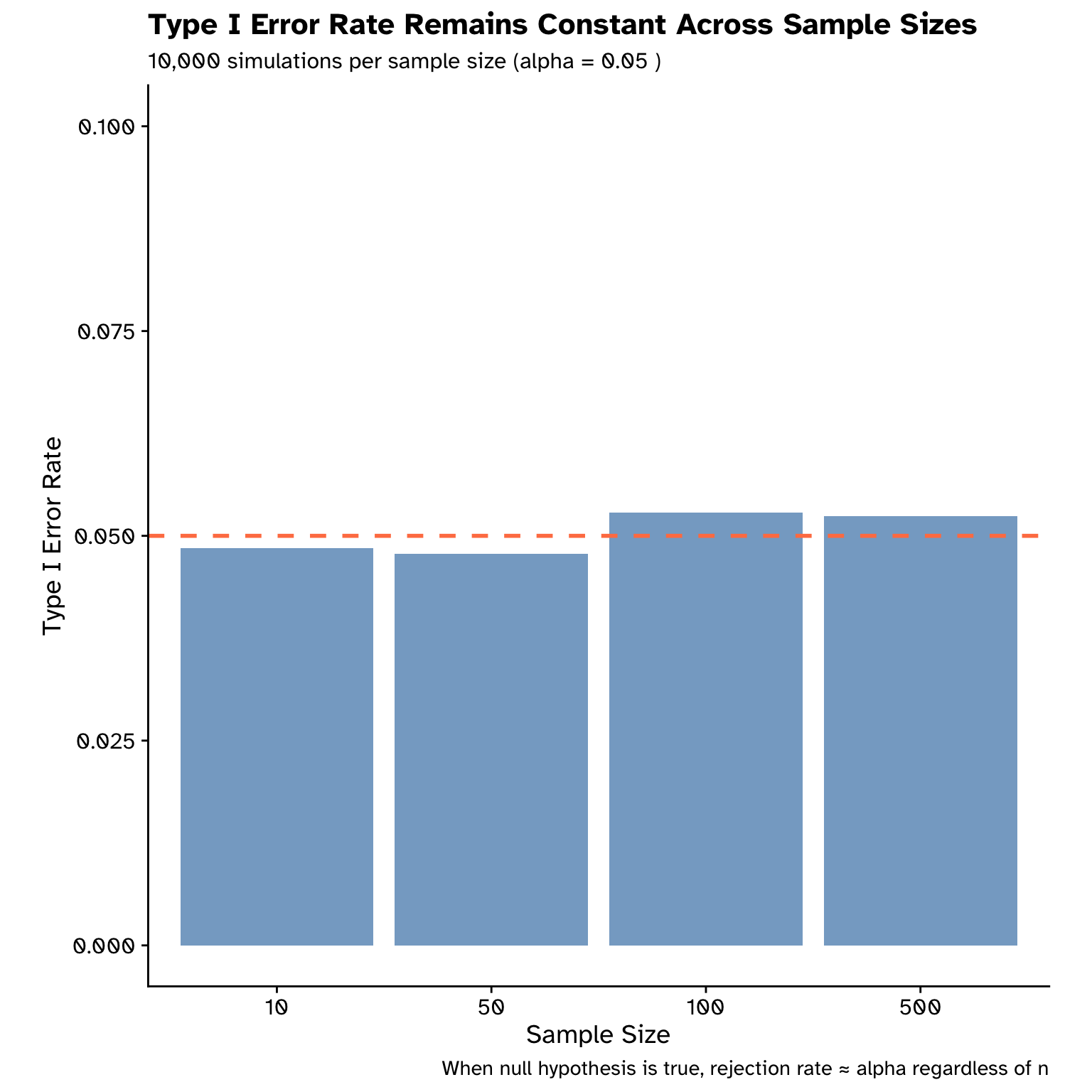

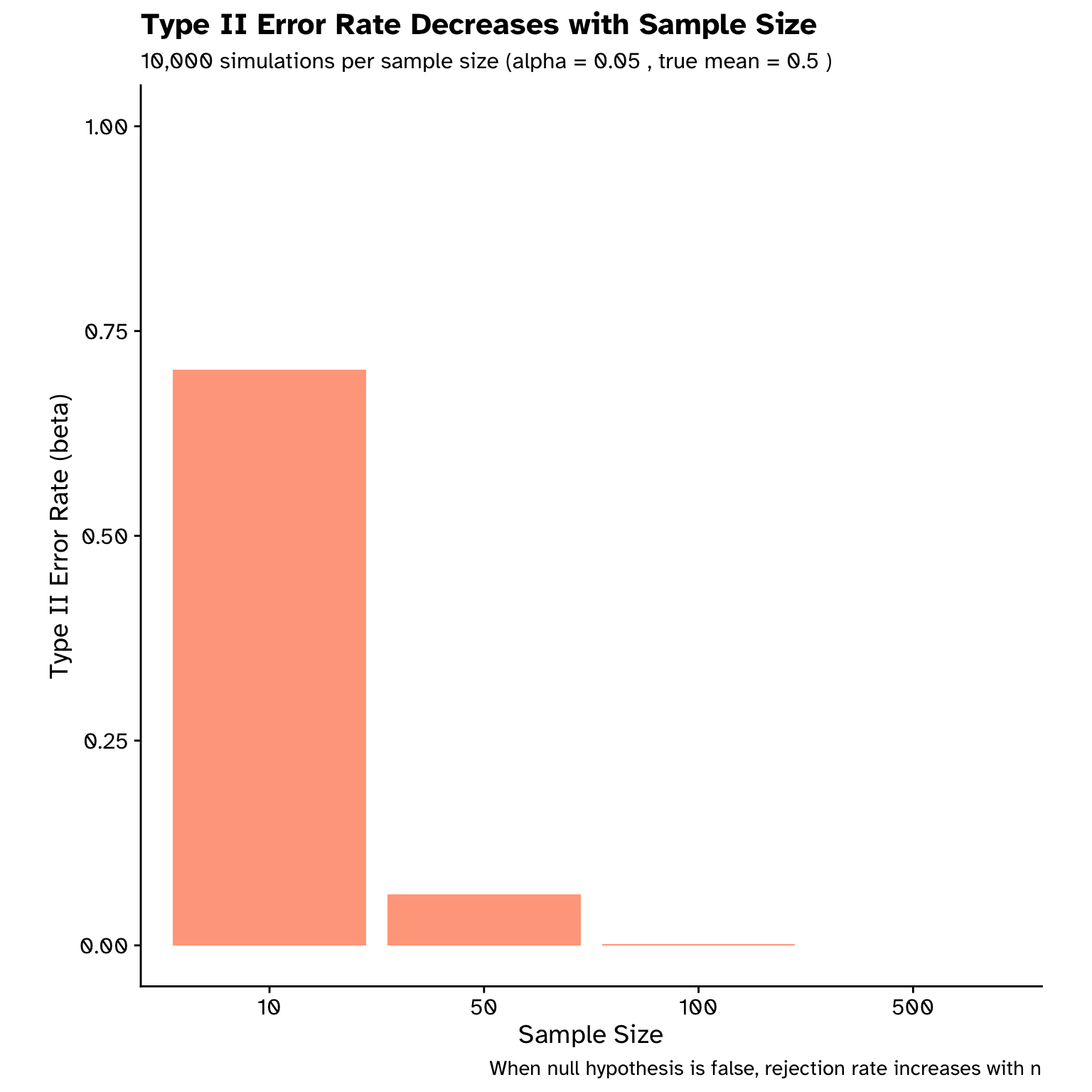

We want to find a balance between two types of errors we could make:

- \(\alpha\): probability of a type I error (false positive)

  - Null hypothesis is TRUE, but we reject it

- \(\beta\): probability of a type II error (false negative)

  - Null hypothesis is FALSE, but we fail to reject it
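As an illustration (a minimal Python sketch, not part of the lecture), \(\alpha\) can be read as the long-run rate of type I errors: simulate many datasets in which the null hypothesis is true, test each one, and count how often it is wrongly rejected. The one-sample z-test with a known \(\sigma\), the true mean of 10 and the function names are assumptions chosen to keep the example short.

```python
import random
from statistics import NormalDist, fmean

def z_test_p_value(sample, mu0, sigma):
    """Two-sided p-value for H0: mean == mu0, assuming a known population SD sigma."""
    n = len(sample)
    z = (fmean(sample) - mu0) / (sigma / n ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))

def type_one_error_rate(alpha=0.05, n=20, n_sims=10_000, seed=1):
    """Simulate data where H0 is TRUE and return the fraction of (wrong) rejections."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_sims):
        sample = [rng.gauss(10, 2) for _ in range(n)]  # true mean is 10, so H0 holds
        if z_test_p_value(sample, mu0=10, sigma=2) < alpha:
            rejections += 1
    return rejections / n_sims

print(type_one_error_rate())
```

With \(\alpha = 0.05\) the printed rejection rate should land close to 0.05.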

Types of error (hypothesis testing)

- To decrease our chance of making a type I error (false positive):

  - Decrease \(\alpha\), e.g. \(\alpha = 0.05\), \(\alpha = 0.01\) or \(\alpha = 0.001\)?

- However, this increases our chance of making a type II error (false negative)

- To decrease our chance of making a type II error:

  - Increase the sample size

  - Increase \(\alpha\) (at the cost of more type I errors)

- We can also view p-values as a continuum of evidence rather than a strict cut-off
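The trade-off can be sketched with a short Python calculation, assuming (hypothetically) a two-sided one-sample z-test with known \(\sigma\) and a true effect of half a standard deviation. Lowering \(\alpha\) raises \(\beta\), while a larger sample lowers it.

```python
from statistics import NormalDist

def type_two_error(alpha, n, effect, sigma):
    """Probability of a type II error (beta) for a two-sided one-sample z-test.

    effect: true difference between the population mean and the null value
    sigma:  known population standard deviation (an illustrative assumption)
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)  # reject H0 when |z| > z_crit
    shift = effect * n ** 0.5 / sigma           # centre of the z statistic when H0 is false
    power = (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)
    return 1 - power

for alpha in (0.05, 0.01, 0.001):
    for n in (10, 40):
        beta = type_two_error(alpha, n, effect=0.5, sigma=1)
        print(f"alpha={alpha:<6} n={n:<3} beta={beta:.3f}")
```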

Probability models

Flipping a coin

- I flip a fair coin over a flat surface

- Two possible outcomes:

  - Heads

  - Tails

- Chance of heads?

Flipping a coin

- 50% chance heads, why?

  - Ignorance?

  - Uncertainty?

  - Expected outcome of a lot of flips?

  - Causal process, property of the universe?

Flipping a coin

\[ P(\text{heads}) = \frac{\text{number of heads}}{\text{total number of flips}} \]

\[ P(\text{success}) = \frac{\text{number of successes}}{\text{total number of trials}} \]

- \(P\ge0\)

- \(P\le1\)
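A minimal Python sketch of the "expected outcome of a lot of flips" view: simulate a fair coin and compute number of heads / total number of flips. The estimate wanders for a handful of flips and settles near 0.5 as the number of flips grows. The simulated coin and the seed are assumptions for illustration.

```python
import random

def proportion_heads(n_flips, seed=42):
    """Estimate P(heads) as number of heads / total number of flips of a simulated fair coin."""
    rng = random.Random(seed)
    heads = sum(rng.random() < 0.5 for _ in range(n_flips))
    return heads / n_flips

for n in (10, 100, 10_000, 1_000_000):
    print(n, proportion_heads(n))
```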

Flipping two coins

- Coin 1: heads

- Coin 2: heads

- \(P(\text{coin 1}=\text{heads} \cap \text{coin 2}=\text{heads}) = 0.5 \times 0.5 = 0.25\)

  - Assumes the two coin flips are independent: \(P(A \cap B) = P(A) \times P(B)\)
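The multiplication rule can be checked by listing every equally likely outcome of two fair, independent coins. This small Python sketch counts the outcomes where both show heads and compares the result with \(0.5 \times 0.5\).

```python
from fractions import Fraction
from itertools import product

# All equally likely outcomes of flipping two fair, independent coins
outcomes = list(product("HT", repeat=2))  # HH, HT, TH, TT

both_heads = sum(1 for c1, c2 in outcomes if c1 == "H" and c2 == "H")
p_both_heads = Fraction(both_heads, len(outcomes))

# Multiplication rule for independent events: P(A and B) = P(A) * P(B)
p_product = Fraction(1, 2) * Fraction(1, 2)

print(p_both_heads, p_product)
```

Both values come out as 1/4; the enumeration only works because independence makes the four outcomes equally likely.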

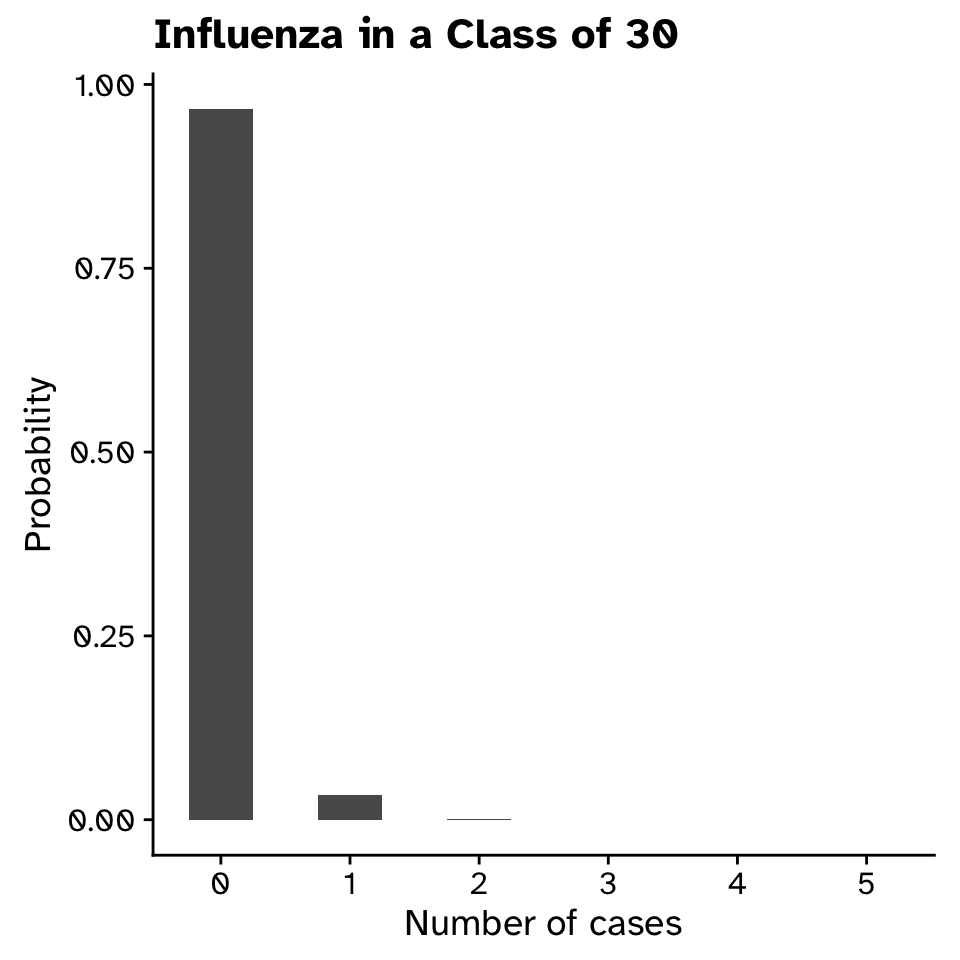

Influenza

- 2024/25 season in Sweden, 115/100,000 had influenza

- What is the probability that at least one person in a class of 30 has it?

- \(P(\text{a given person has the flu})=115/100000=0.00115\)

- \(P(\text{a given person doesn't have the flu})=1-0.00115=0.99885\)

- \(P(\text{no one has the flu}) = 0.99885^{30} \approx 0.9660692\) (assuming people catch the flu independently of each other)

- \(P(\text{at least one has the flu}) = 1-0.9660692=0.0339308 \approx 3.4 \%\)
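The influenza arithmetic above, written as a short Python sketch (the variable names are our own). Like the calculation on the slide, it assumes that whether one person has the flu is independent of everyone else in the class.

```python
p_flu = 115 / 100_000       # P(a given person has the flu)
class_size = 30

p_no_flu = 1 - p_flu                      # P(a given person doesn't have the flu)
p_no_one = p_no_flu ** class_size         # multiply 30 independent probabilities
p_at_least_one = 1 - p_no_one             # complement rule

print(f"P(no one has the flu)       = {p_no_one:.7f}")
print(f"P(at least one has the flu) = {p_at_least_one:.4f}")
```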

Probability distributions

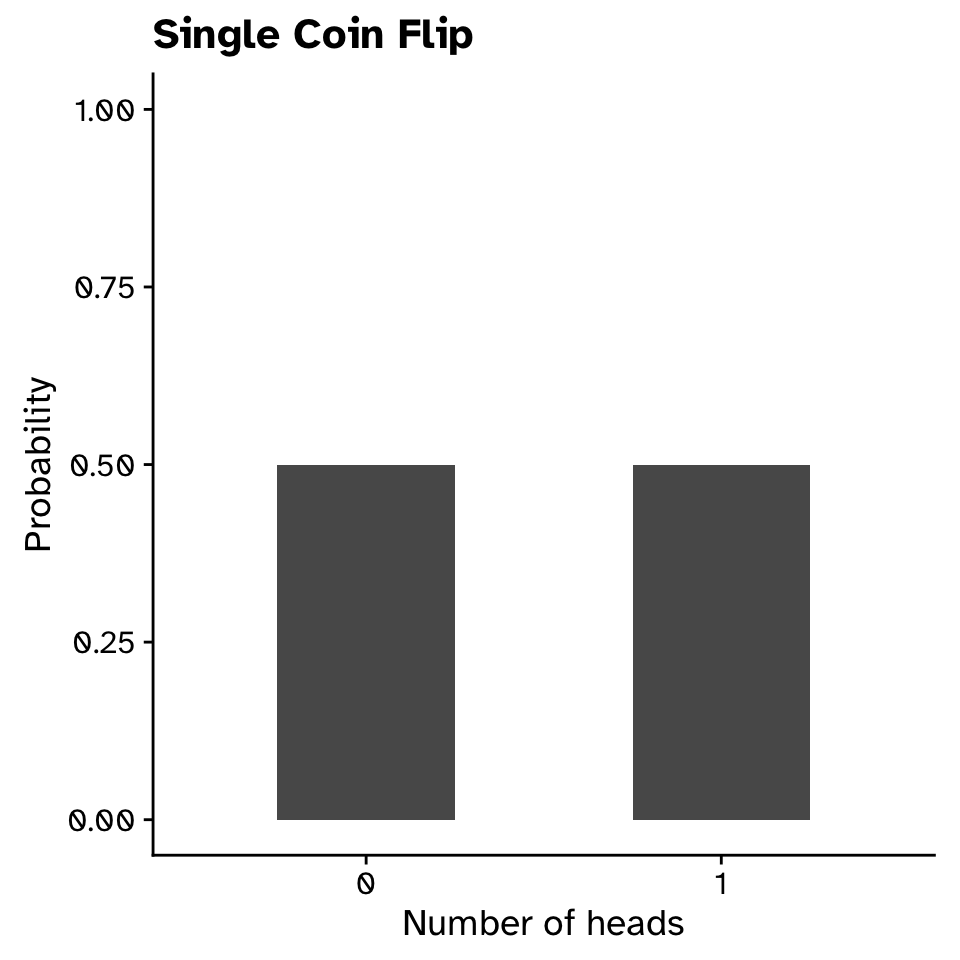

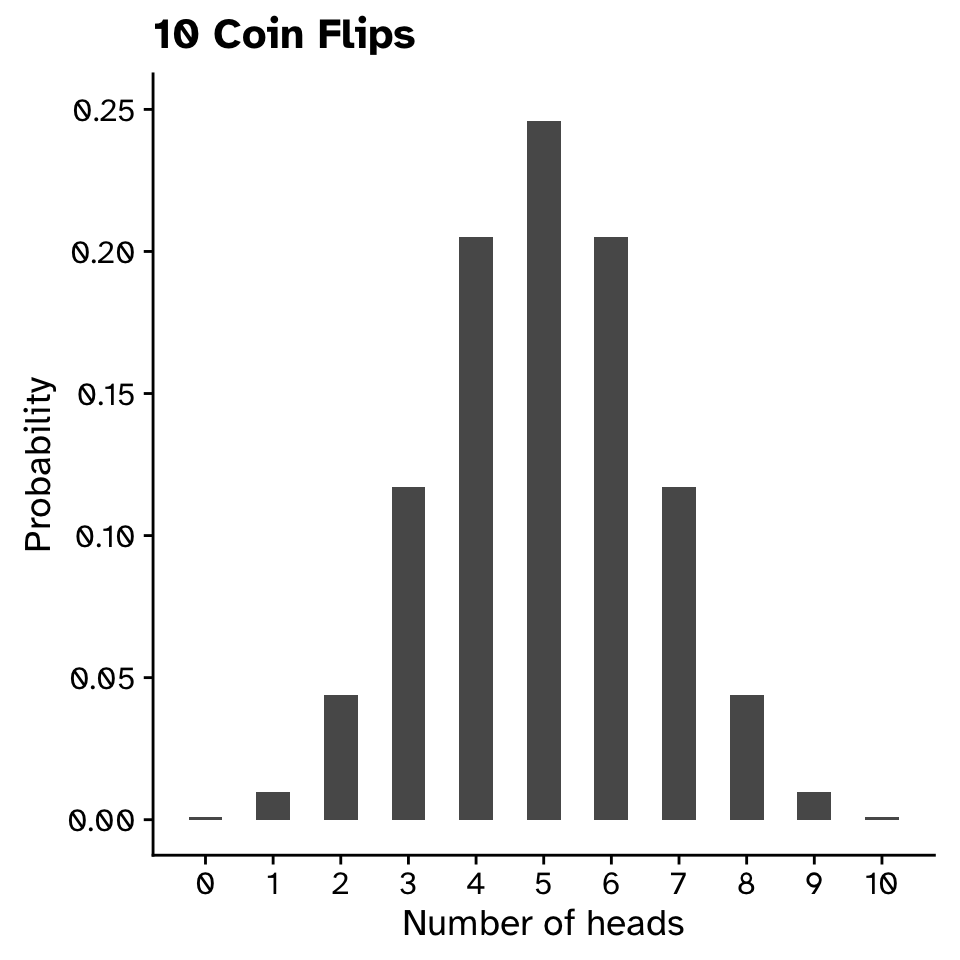

Binomial distribution: flipping a coin

Probability distributions

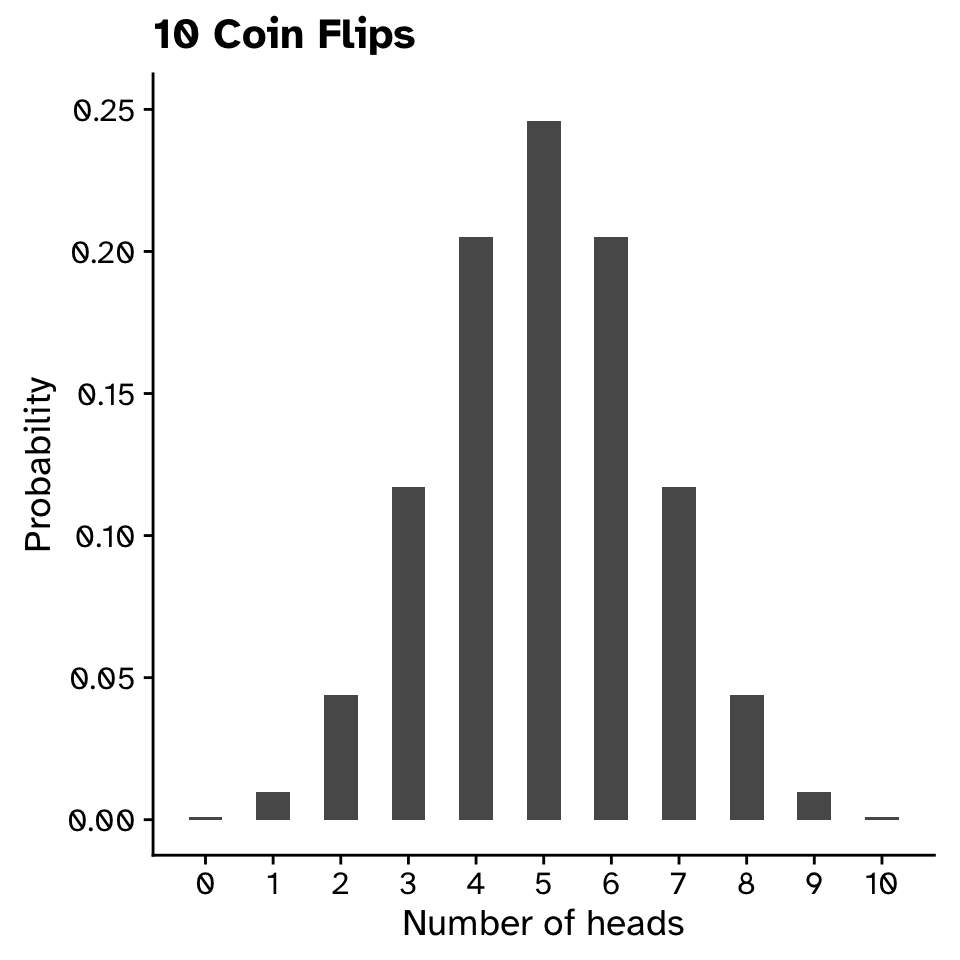

Bimodal distribution: flipping a coin

- \(P(X=5)\approx0.25\)

- \(P(4\le X\le6)=P(X=4)+P(X=5)+P(X=6)\approx0.2+0.25+0.2=0.65\)

- \(P(X \ge 9) \approx 0.011\)
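These probabilities can also be approximated by brute force. The sketch below assumes the figure shows 10 flips of a fair coin (not stated on the slide, but consistent with \(P(X \ge 9) \approx 0.011\)); it repeats the 10-flip experiment many times and tabulates how often each number of heads occurs.

```python
import random
from collections import Counter

def simulate_heads_counts(n_flips=10, n_experiments=100_000, seed=7):
    """Repeat 'flip a fair coin n_flips times and count heads'; return relative frequencies."""
    rng = random.Random(seed)
    counts = Counter(
        sum(rng.random() < 0.5 for _ in range(n_flips))
        for _ in range(n_experiments)
    )
    return {k: counts[k] / n_experiments for k in range(n_flips + 1)}

dist = simulate_heads_counts()
print("P(X = 5)       ~", round(dist[5], 3))
print("P(4 <= X <= 6) ~", round(dist[4] + dist[5] + dist[6], 3))
print("P(X >= 9)      ~", round(dist[9] + dist[10], 3))
```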

Probability distributions

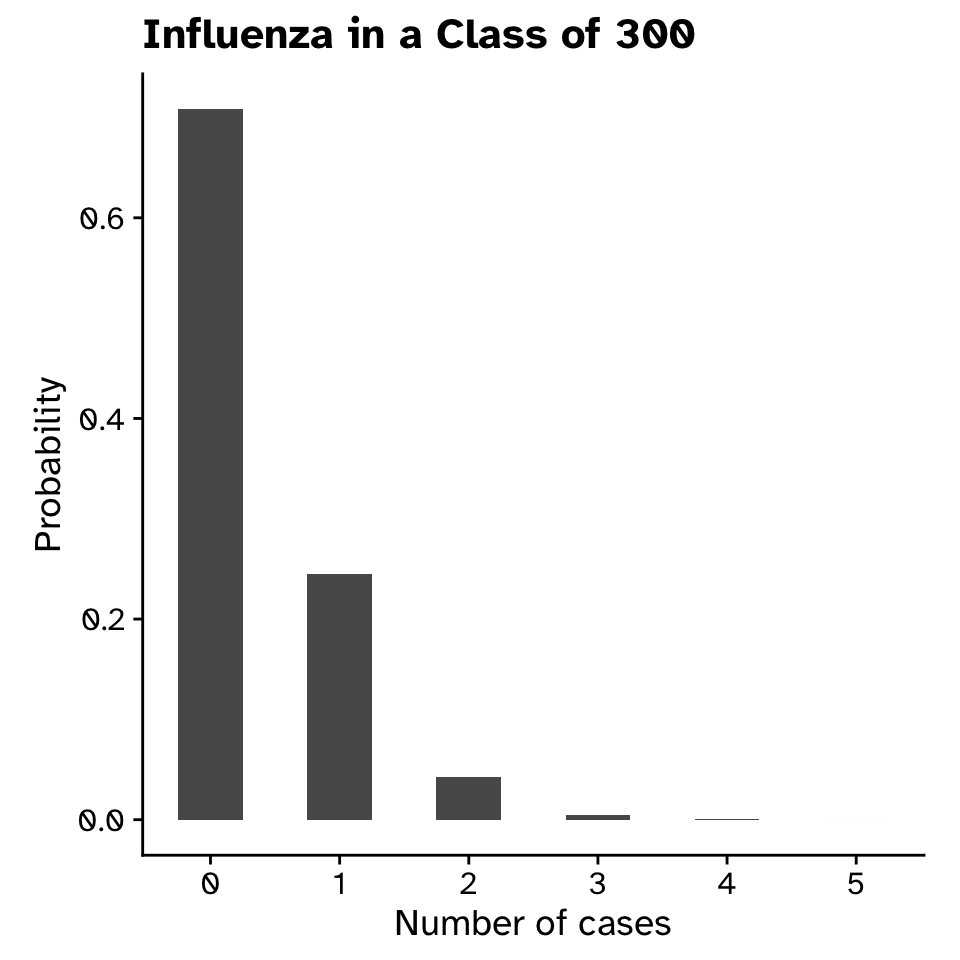

Bimodal distribution: Influenza

Binomial distribution (no need to learn this)

\[ P(X = k) = \binom{n}{k} p^k (1-p)^{n-k} \]

where:

- \(n\) = number of trials

- \(k\) = number of successes

- \(p\) = probability of success on each trial

- \(\binom{n}{k} = \frac{n!}{k!(n-k)!}\) = binomial coefficient
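The formula translates almost directly into Python, using `math.comb` for the binomial coefficient. Assuming again 10 flips of a fair coin, it gives the exact values behind the rounded coin-flipping numbers; with \(n = 30\) and \(p = 0.00115\) it reproduces the influenza result, since \(P(X \ge 1) = 1 - P(X = 0)\).

```python
from math import comb

def binomial_pmf(k, n, p):
    """P(X = k) for a binomial distribution with n trials and success probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

# Ten flips of a fair coin (assumed setup for the coin-flipping example)
n, p = 10, 0.5
print(binomial_pmf(5, n, p))                          # 0.24609375
print(sum(binomial_pmf(k, n, p) for k in (4, 5, 6)))  # 0.65625
print(sum(binomial_pmf(k, n, p) for k in (9, 10)))    # 0.0107421875

# Influenza: P(at least one of 30 people has the flu) = 1 - P(X = 0)
print(1 - binomial_pmf(0, 30, 115 / 100_000))
```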

Binomial distribution

- A distribution is shaped by its parameters

- The binomial distribution has two parameters:

  - \(n\) = number of trials

  - \(p\) = probability of success on each trial

- Discrete observations (the number of successes, \(0, 1, \dots, n\))

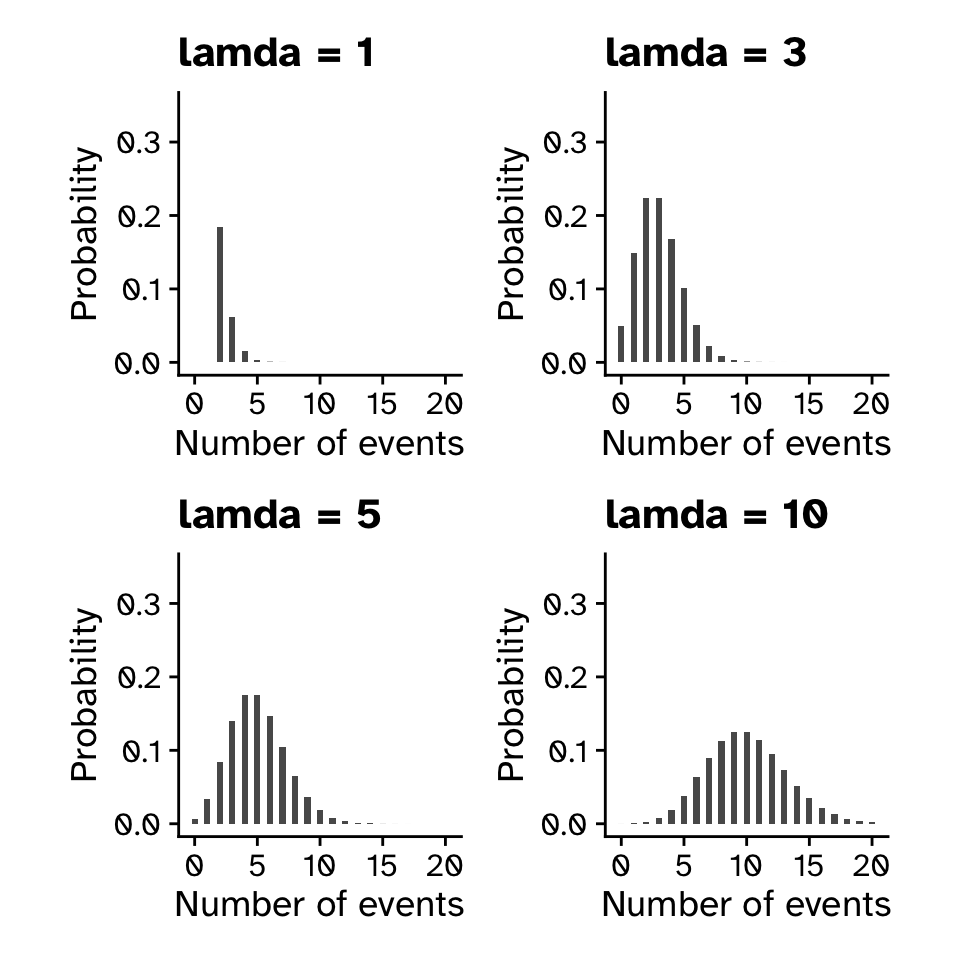

Poisson distribution

- Describes the number of events happening over some interval (time/space), e.g.:

  - Number of animals recorded by a camera trap in a day

  - Number of mutations in a DNA sequence

  - Number of typos in a presentation

Poisson distribution (no need to learn this)

\[ P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!} \]

where:

- \(k\) = number of events

- \(\lambda\) = expected number of events in the interval

- \(e\) = Euler’s number (≈ 2.718)
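A direct Python translation of the Poisson formula; the camera-trap rate of 3 animals per day is a made-up value. Summing over a long range of \(k\) confirms numerically that the mean and the variance both equal \(\lambda\).

```python
from math import exp, factorial

def poisson_pmf(k, lam):
    """P(X = k) for a Poisson distribution with lam expected events per interval."""
    return lam**k * exp(-lam) / factorial(k)

lam = 3  # hypothetical: a camera trap records 3 animals per day on average

for k in range(7):
    print(f"P(X = {k}) = {poisson_pmf(k, lam):.4f}")

# Mean and variance, truncating the sum where the remaining tail is negligible
ks = range(100)
mean = sum(k * poisson_pmf(k, lam) for k in ks)
var = sum((k - mean) ** 2 * poisson_pmf(k, lam) for k in ks)
print(round(mean, 6), round(var, 6))
```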

Poisson distribution

- 1 parameter:

  - \(\lambda\) = expected number of events in the interval

  - \(\lambda\) is both the mean and the variance of the distribution

- Discrete observations (counts \(0, 1, 2, \dots\))

Probability mass functions (binomial & Poisson)

- Discrete events: only whole-number outcomes have a probability

- \(P(X = 1.5)=0\)

- The probability of any value between the bars is 0

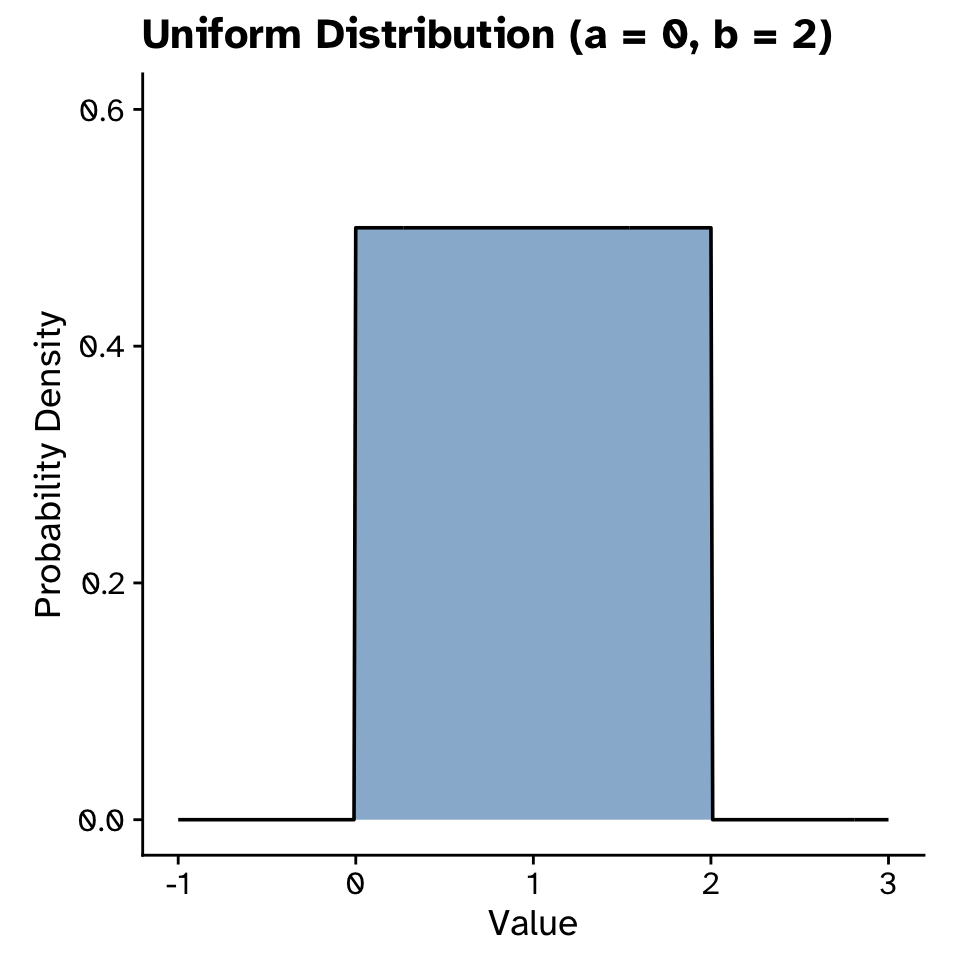

Uniform distribution

- Continuous distribution

- Two parameters: \(a\) (minimum) and \(b\) (maximum)

Uniform distribution

- It is not meaningful to ask for the probability of a specific value (it is always 0)

- Instead, we look at the probability of intervals

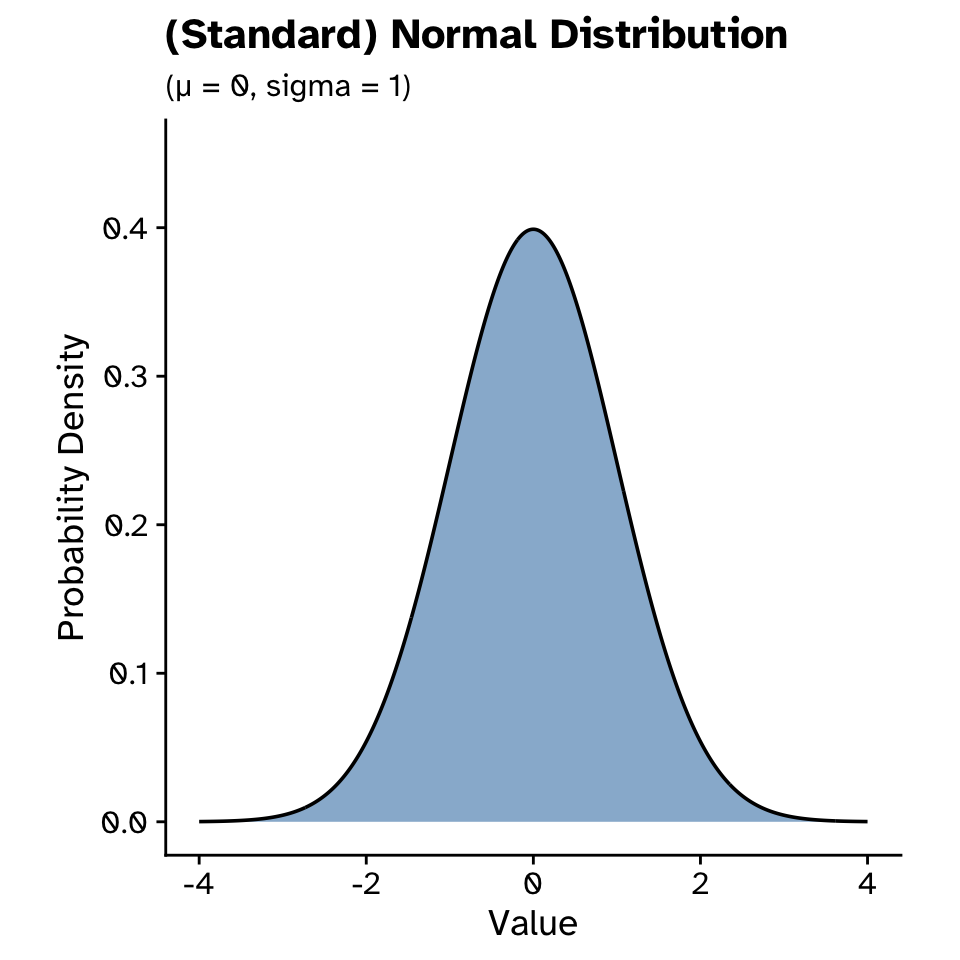

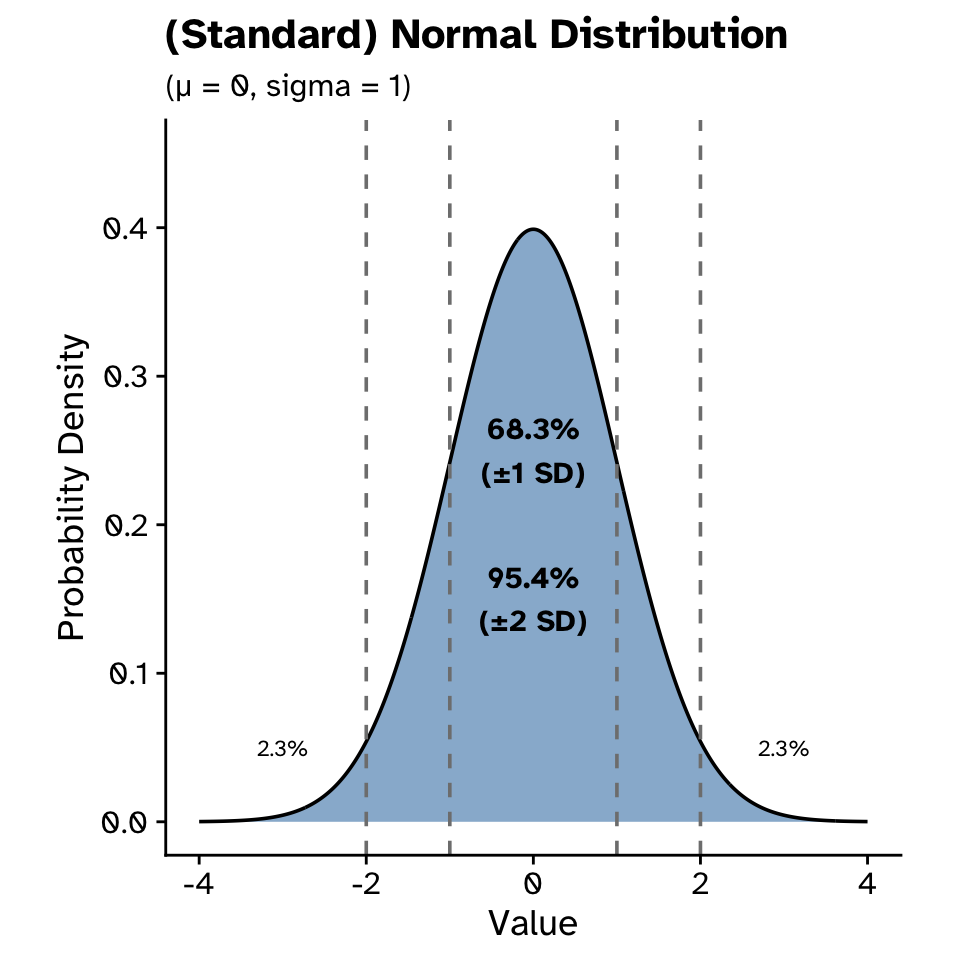

Normal distribution

Normal distribution

- Continuous distribution

- Can take any real value

- Two parameters: \(\mu\) (mean) and \(\sigma\) (standard deviation)

Normal distribution (no need to learn this)

\[ f(x) = \frac{1}{\sigma\sqrt{2\pi}} e^{-\frac{(x-\mu)^2}{2\sigma^2}} \]

where:

- \(\mu\) = mean

- \(\sigma\) = standard deviation

- \(e\) = Euler’s number (≈ 2.718)

- \(\pi\) = pi (≈ 3.14159)
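The density formula can be coded directly and compared with Python's `statistics.NormalDist`; the mean of 170 and standard deviation of 10 (heights in cm) are hypothetical. Note that \(f(x)\) is a density, not a probability: probabilities come from areas under the curve, here obtained through the cumulative distribution function `cdf`.

```python
from math import exp, pi, sqrt
from statistics import NormalDist

def normal_pdf(x, mu, sigma):
    """Normal density f(x), written out term by term from the formula."""
    return exp(-((x - mu) ** 2) / (2 * sigma**2)) / (sigma * sqrt(2 * pi))

mu, sigma = 170, 10  # hypothetical heights in cm
print(normal_pdf(180, mu, sigma), NormalDist(mu, sigma).pdf(180))

# Probability of an interval = area under the density = difference of CDF values
p_160_to_180 = NormalDist(mu, sigma).cdf(180) - NormalDist(mu, sigma).cdf(160)
print(round(p_160_to_180, 3))  # 0.683: within one SD of the mean
```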

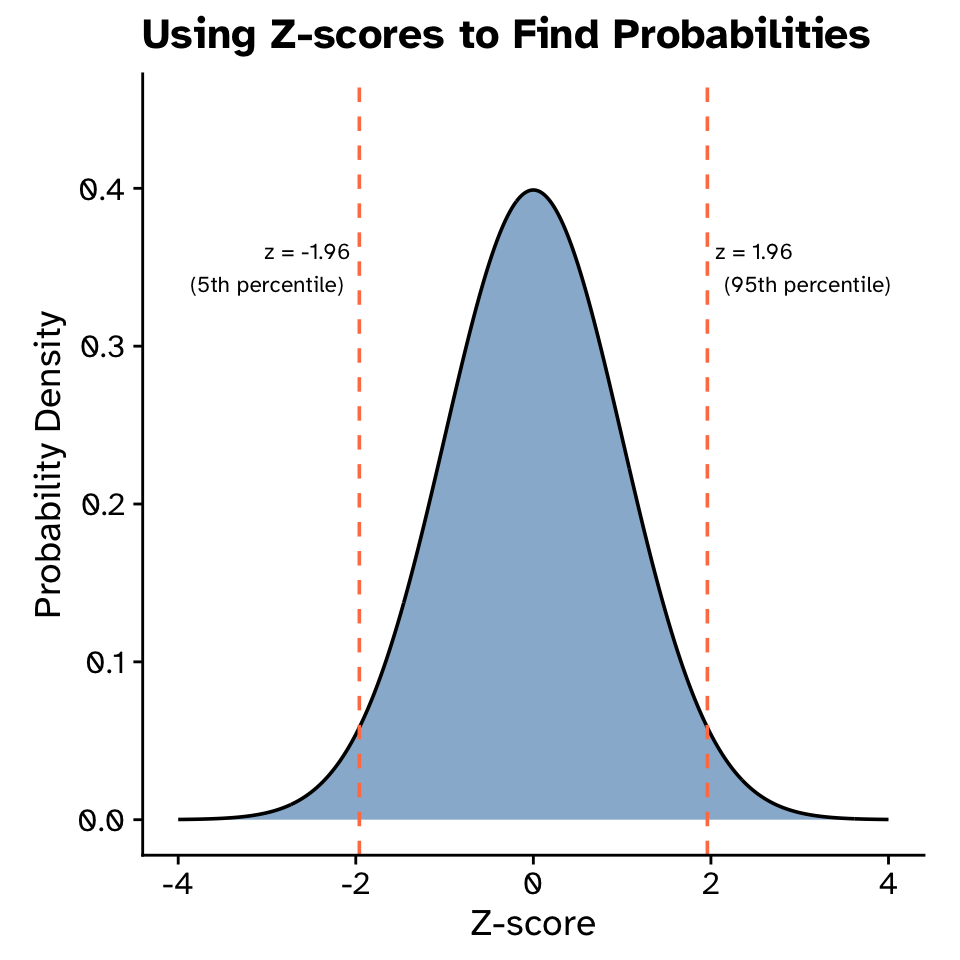

Normal distribution: Z-scores

- Standardize values from a normal distribution

- Tells us how many standard deviations away from the mean

- Formula: \(z = \frac{x - \mu}{\sigma}\)

  where:

  - \(x\) = observed value

  - \(\mu\) = mean

  - \(\sigma\) = standard deviation

- Converts values from different scales to a common scale (mean = 0, SD = 1)

- Enables use of standard normal tables to find probabilities and percentiles
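A short Python sketch of standardising, in which `statistics.NormalDist(0, 1)` stands in for a printed standard normal table. The two measurements and their means and SDs are hypothetical.

```python
from statistics import NormalDist

def z_score(x, mu, sigma):
    """Number of standard deviations that x lies from the mean."""
    return (x - mu) / sigma

# Two hypothetical measurements on different scales
z_a = z_score(185, mu=170, sigma=10)  # 1.5 SD above its mean
z_b = z_score(62, mu=50, sigma=6)     # 2.0 SD above its mean

std_normal = NormalDist(0, 1)         # standard normal: mean 0, SD 1
print(z_a, z_b)
print(std_normal.cdf(z_b))            # proportion of values below z_b (its percentile)
print(std_normal.inv_cdf(0.975))      # z value with 97.5% of the distribution below it
```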

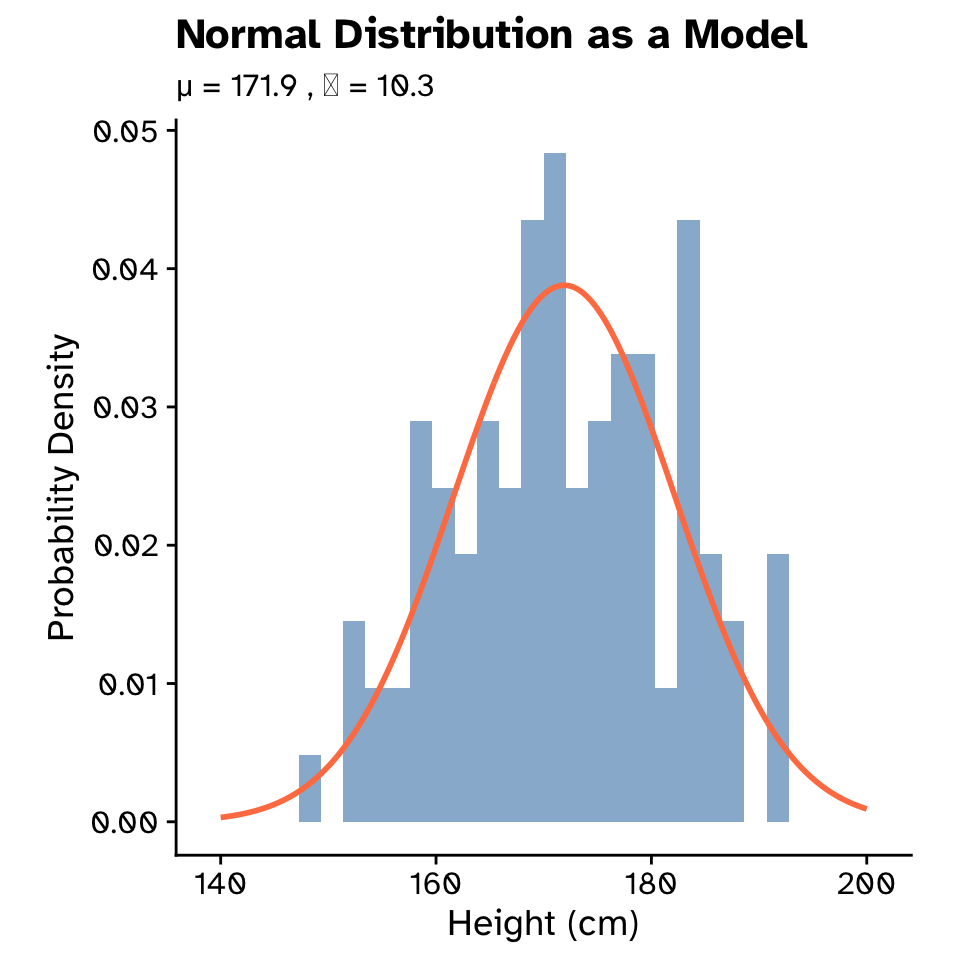

Normal distribution as a model

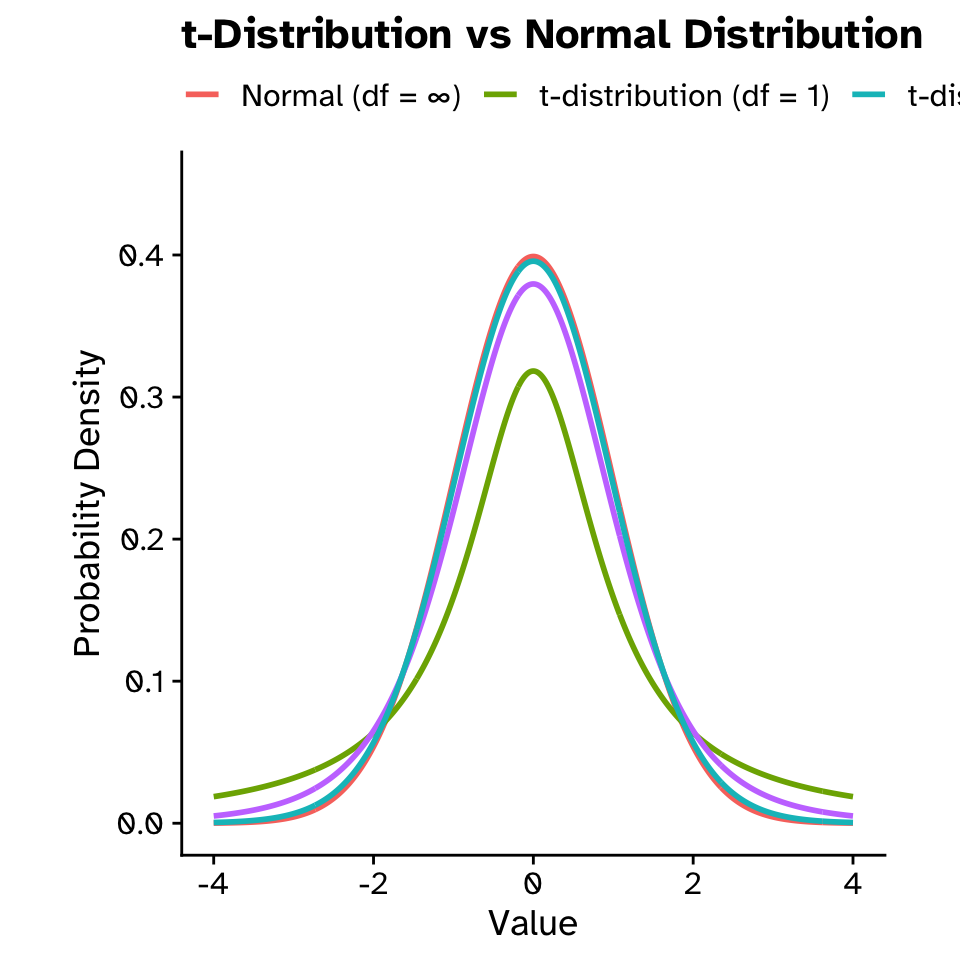

Normal distribution as a model (t-distribution)

- We use the t-distribution when estimating a population mean and the population standard deviation \(\sigma\) is unknown.

- It accounts for the extra uncertainty from using the sample standard deviation \(s\) instead of \(\sigma\).

- This is especially important for small sample sizes \(n\), where normal-based methods can be too optimistic (confidence intervals too narrow, p-values too small).
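One way to see why normal-based methods are too optimistic for small \(n\): if we use the normal cut-off of about 1.96 when the statistic actually follows a t-distribution, the real chance of exceeding it is larger than 5%. This Python sketch computes \(P(|T| > 1.96)\) for several degrees of freedom by integrating the t density numerically with Simpson's rule (our own choice; in practice a library routine such as SciPy's `scipy.stats.t` would normally be used).

```python
from math import gamma, pi, sqrt
from statistics import NormalDist

def t_pdf(x, df):
    """Density of Student's t-distribution with df degrees of freedom."""
    c = gamma((df + 1) / 2) / (sqrt(df * pi) * gamma(df / 2))
    return c * (1 + x * x / df) ** (-(df + 1) / 2)

def t_two_tailed(x, df, steps=20_000):
    """P(|T| > x) = 1 - integral of the density from -x to x (Simpson's rule, even steps)."""
    h = 2 * x / steps
    total = t_pdf(-x, df) + t_pdf(x, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(-x + i * h, df)
    return 1 - total * h / 3

z_crit = NormalDist().inv_cdf(0.975)  # about 1.96
for df in (4, 9, 29, 99):
    print(df, round(t_two_tailed(z_crit, df), 4))
```

The tail probability shrinks towards 0.05 as the degrees of freedom (and hence \(n\)) increase.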

Normal distribution as a model (t-distribution)

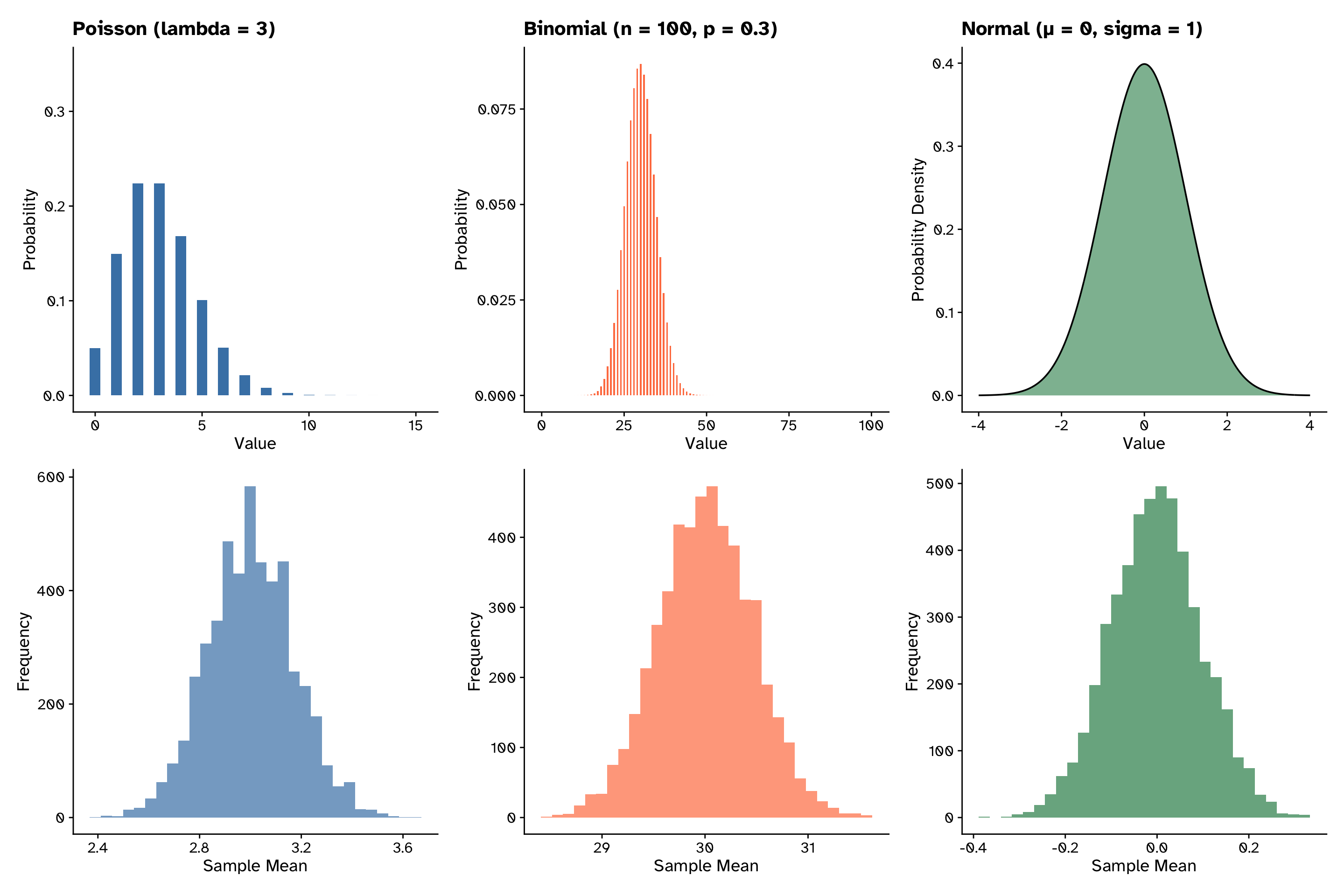

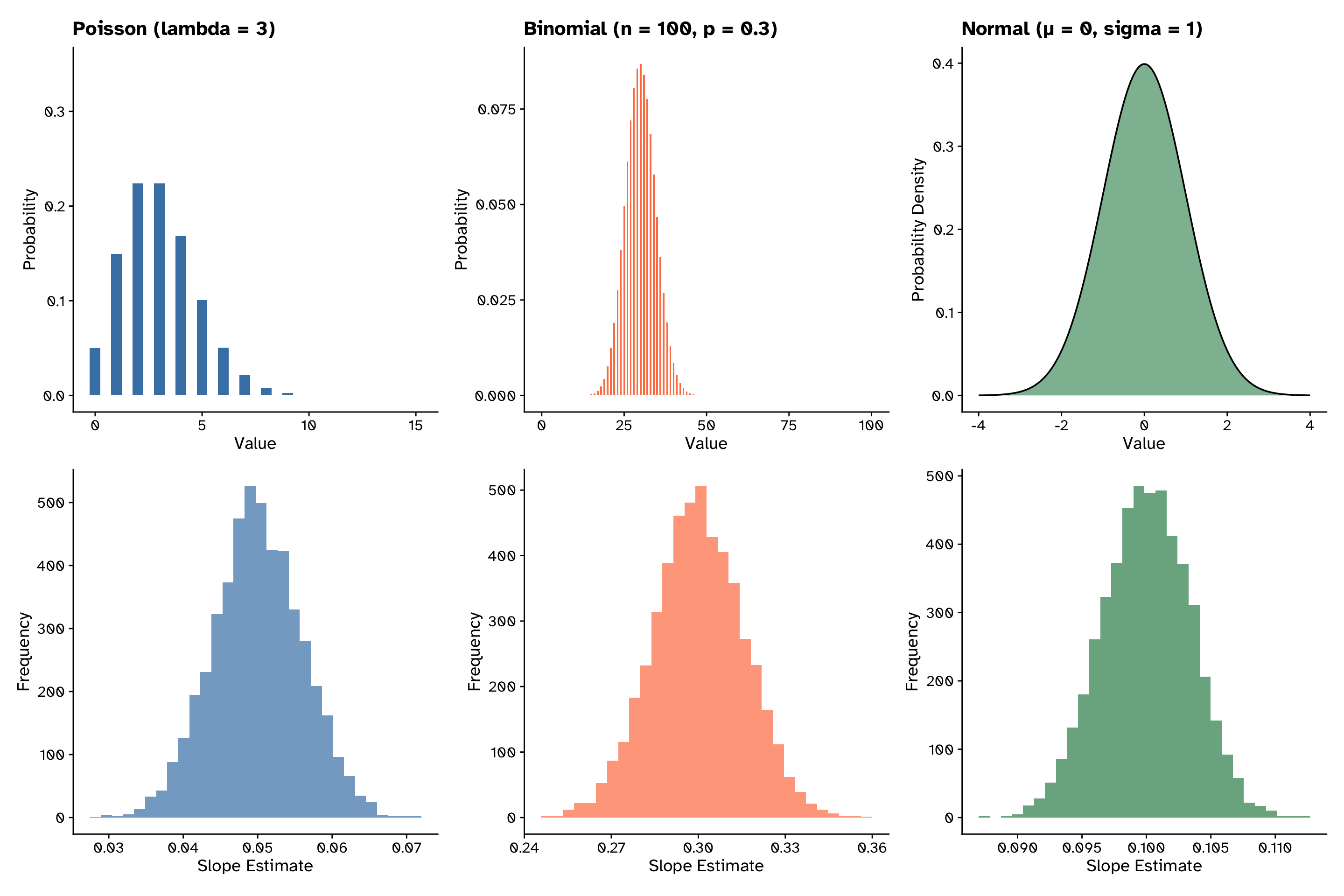

Central limit theorem

The distribution of sample means is approximately normal

If we repeatedly sample values from almost any distribution (any with a finite variance) and calculate the mean of each sample, then as we increase the sample size, the distribution of these means gets closer and closer to a normal distribution (Duthie 2025)
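The central limit theorem can be watched in a small Python simulation. The exponential distribution used here is an arbitrary, strongly right-skewed choice; as the sample size \(n\) grows, the skewness of the sample means shrinks towards 0 (the value for a normal distribution) and their standard deviation shrinks like \(1/\sqrt{n}\). Skewness is only a rough check of normality, not a formal test.

```python
import random
from statistics import fmean, stdev

def sample_means(n, n_samples=5_000, seed=11):
    """Means of n_samples samples of size n from a skewed Exponential(1) distribution."""
    rng = random.Random(seed)
    return [fmean(rng.expovariate(1.0) for _ in range(n)) for _ in range(n_samples)]

def skewness(values):
    """Sample skewness: 0 for a symmetric distribution such as the normal."""
    m, s = fmean(values), stdev(values)
    return fmean(((v - m) / s) ** 3 for v in values)

for n in (1, 5, 30, 100):
    means = sample_means(n)
    print(f"n={n:>3}  mean={fmean(means):.3f}  sd={stdev(means):.3f}  skew={skewness(means):.2f}")
```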

The distribution of sample means is approximately normal

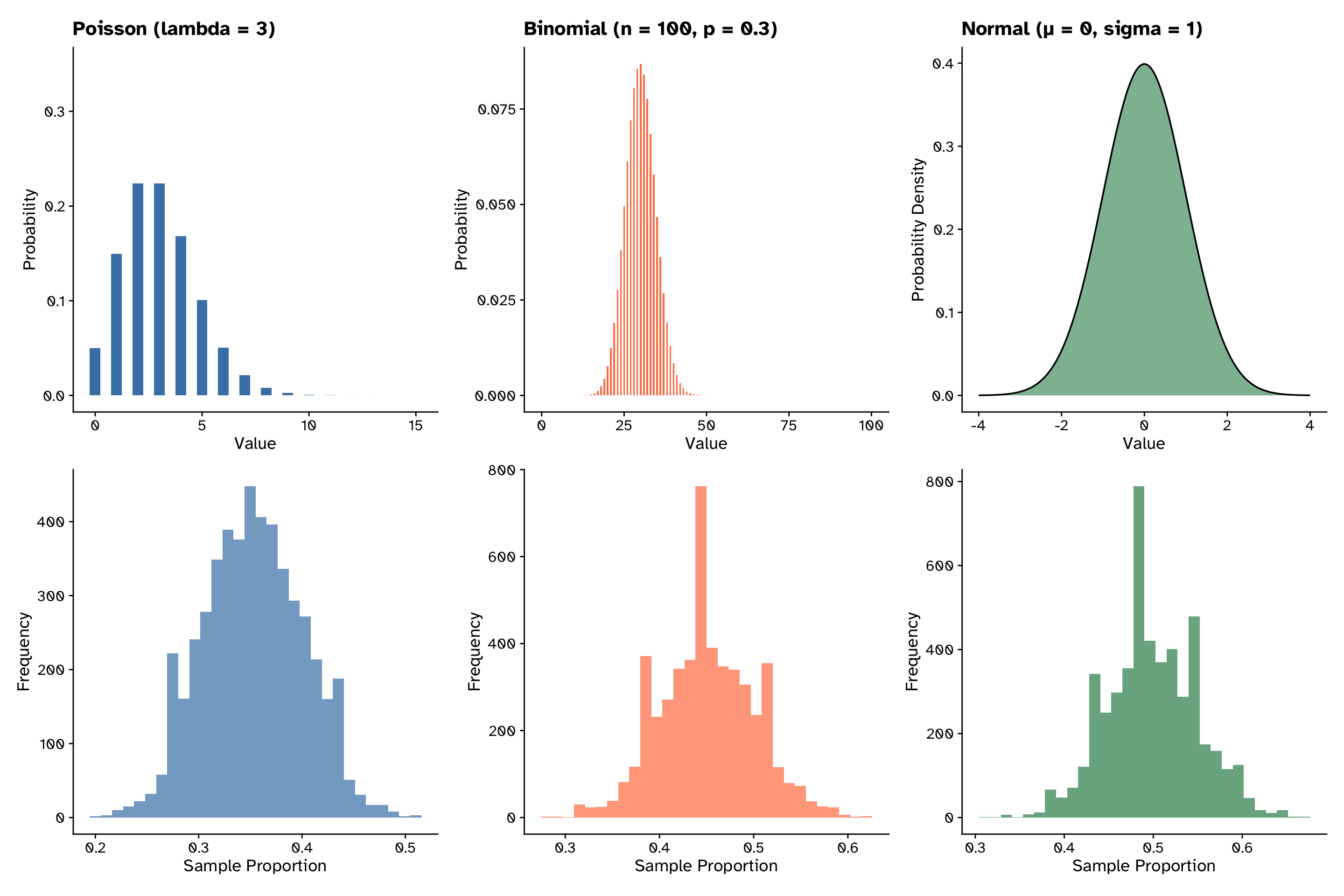

The distribution of sample proportions is approximately normal

The distribution of sample slopes is approximately normal

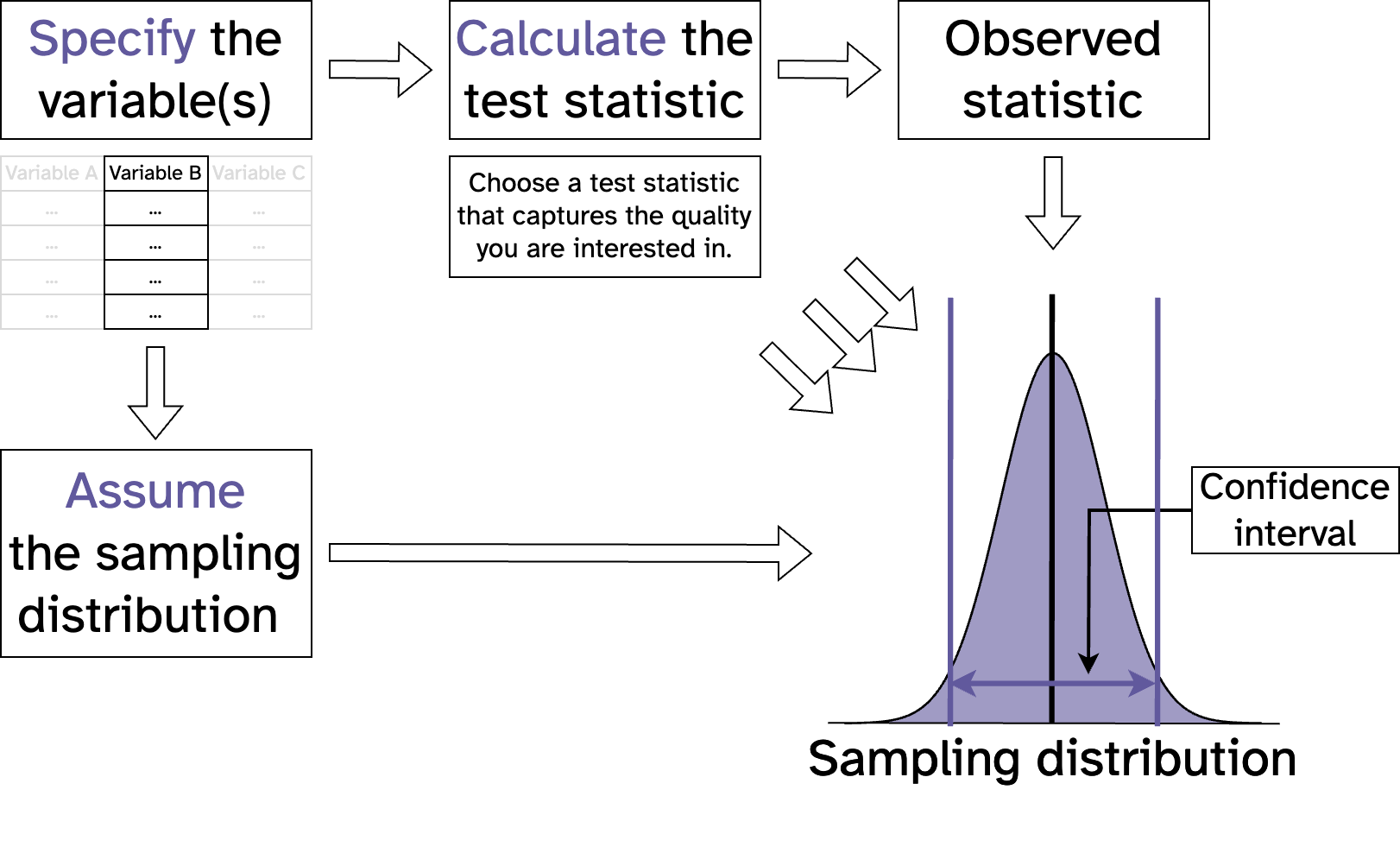

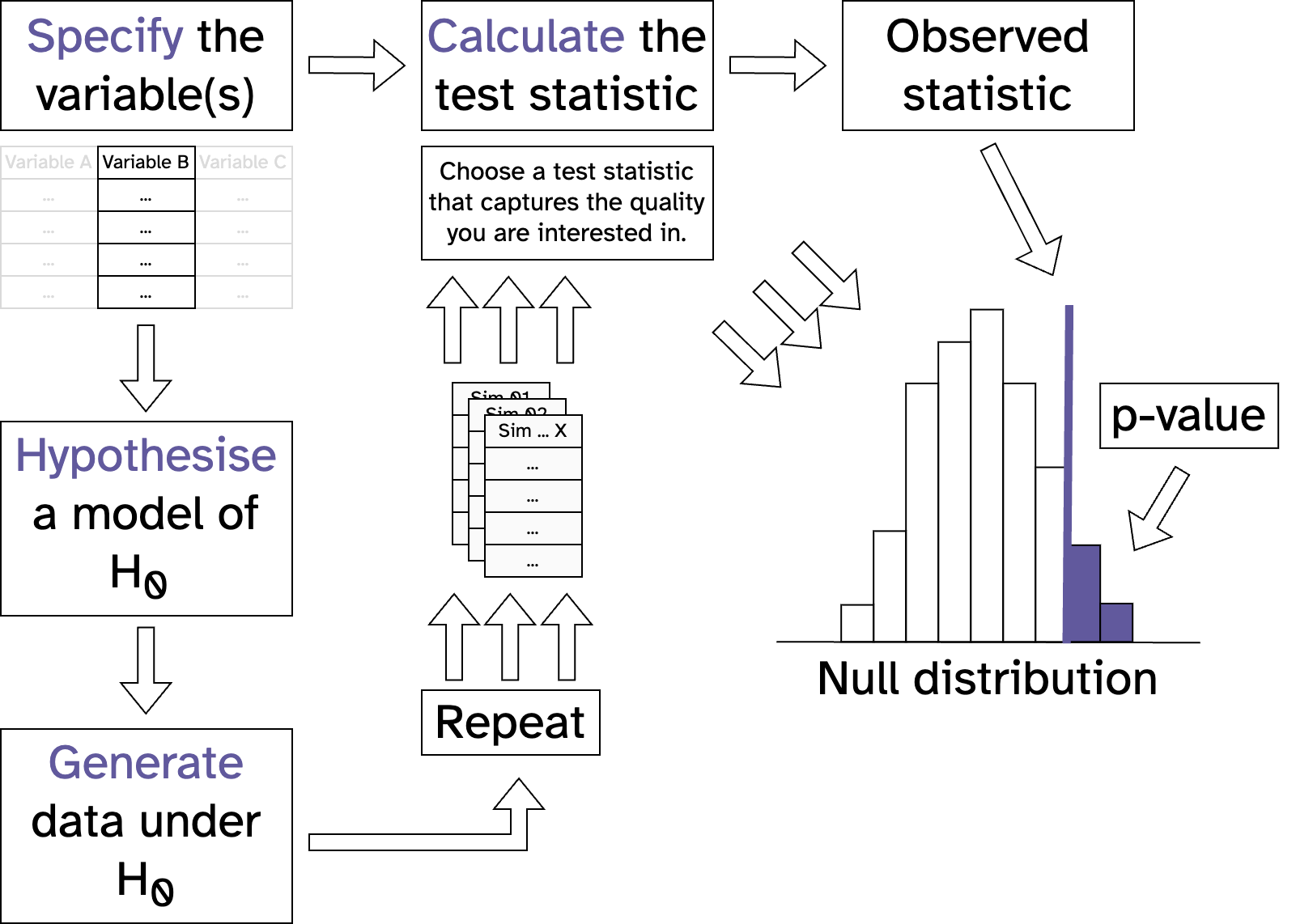

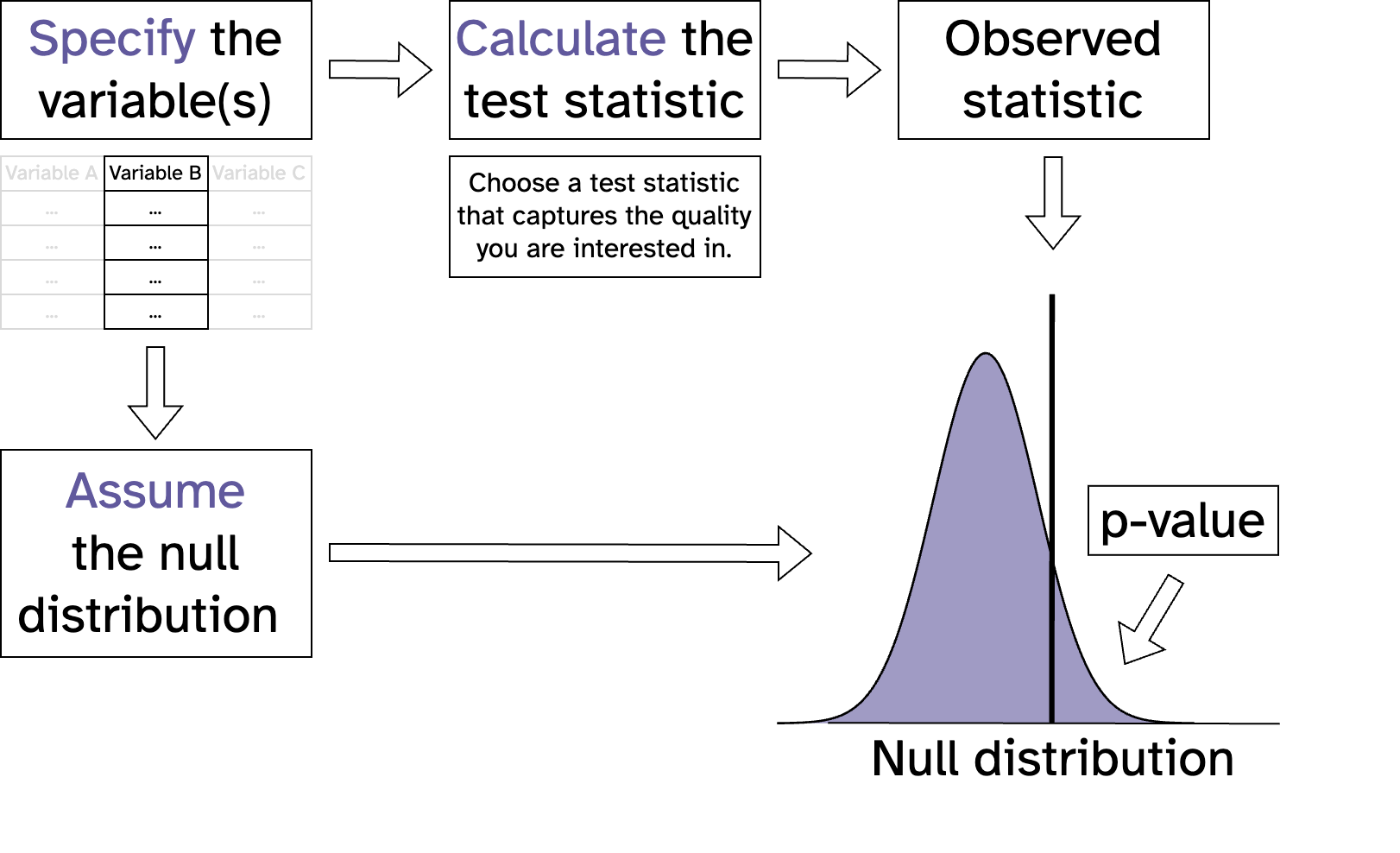

Statistical inference with maths

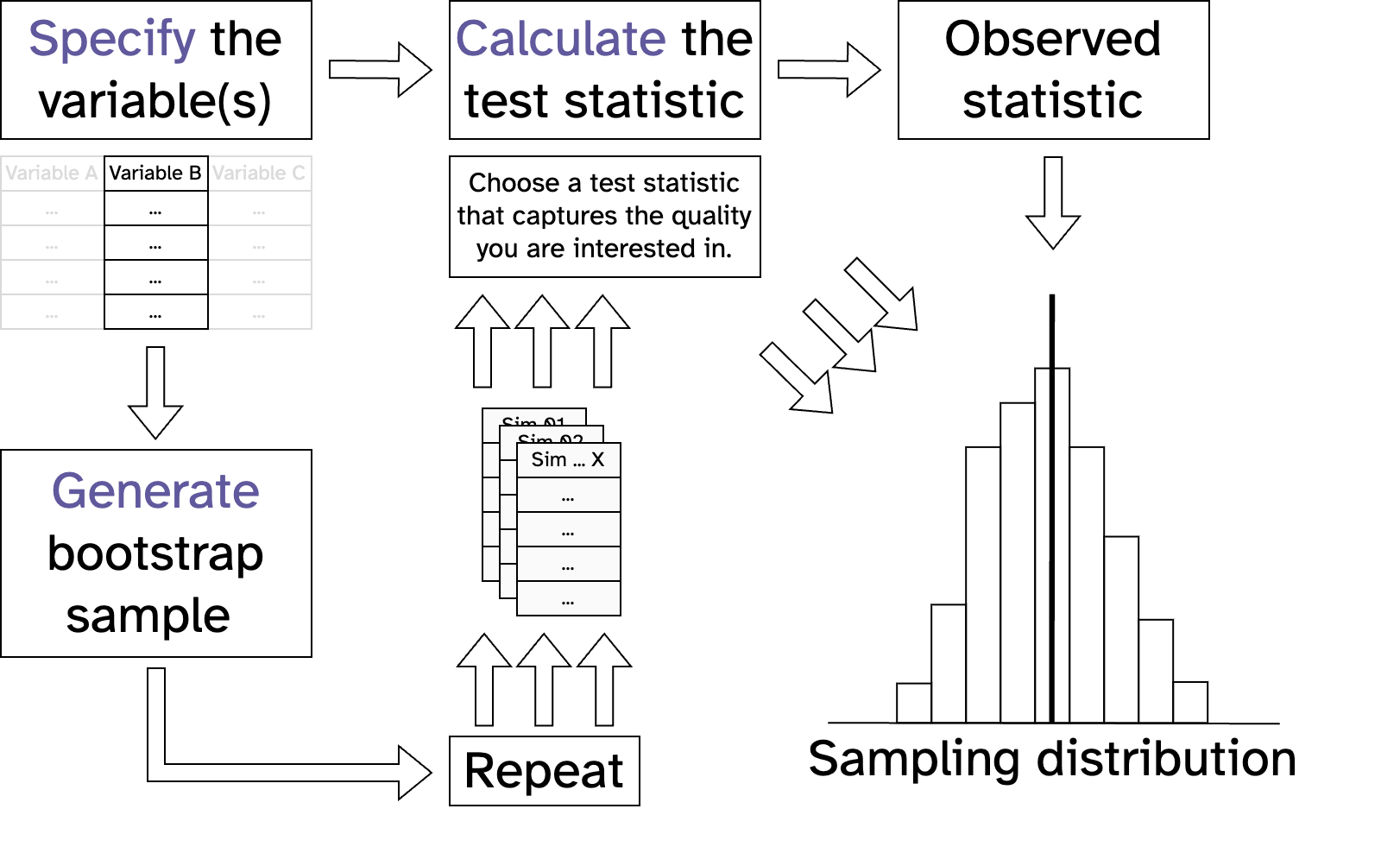

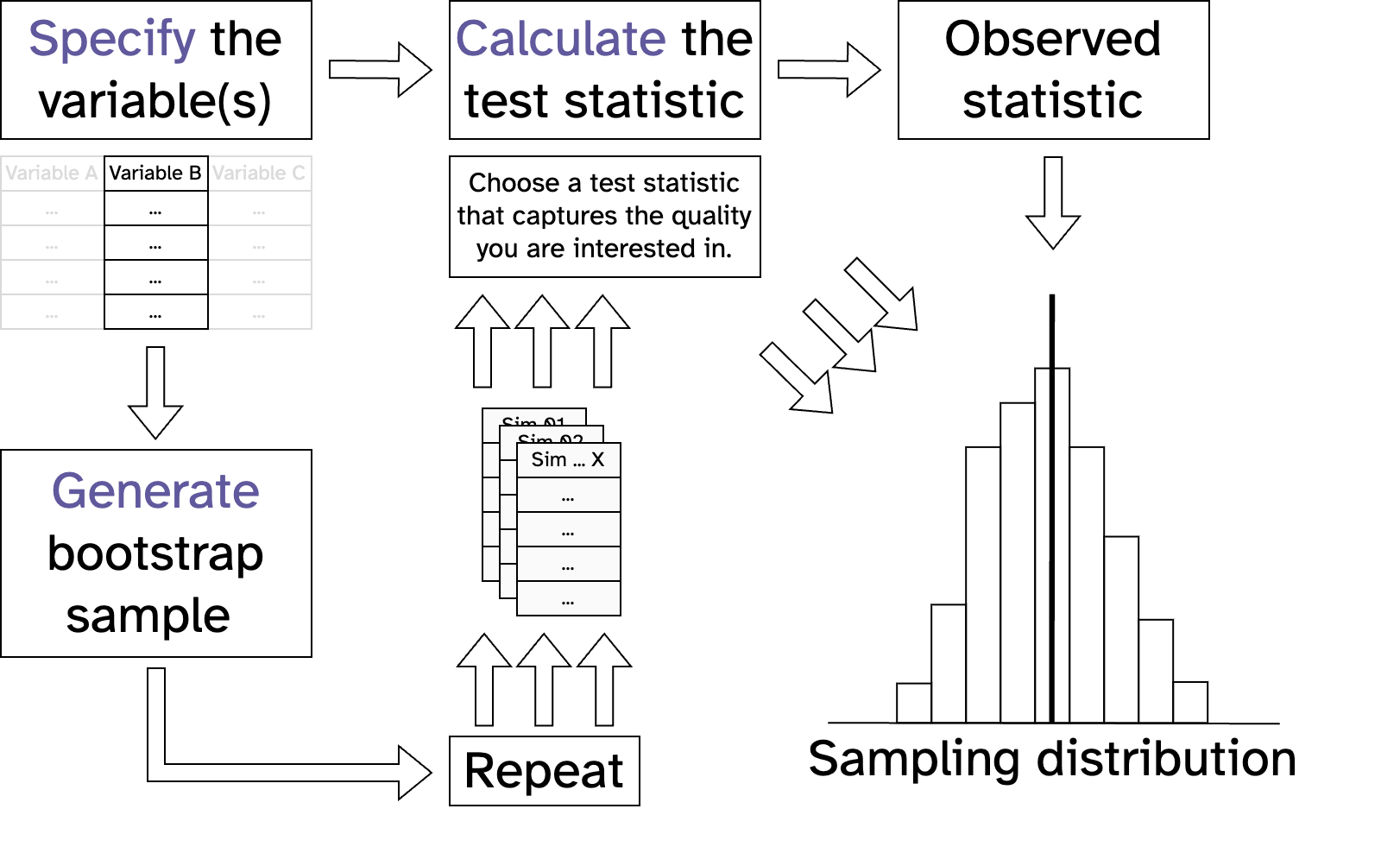

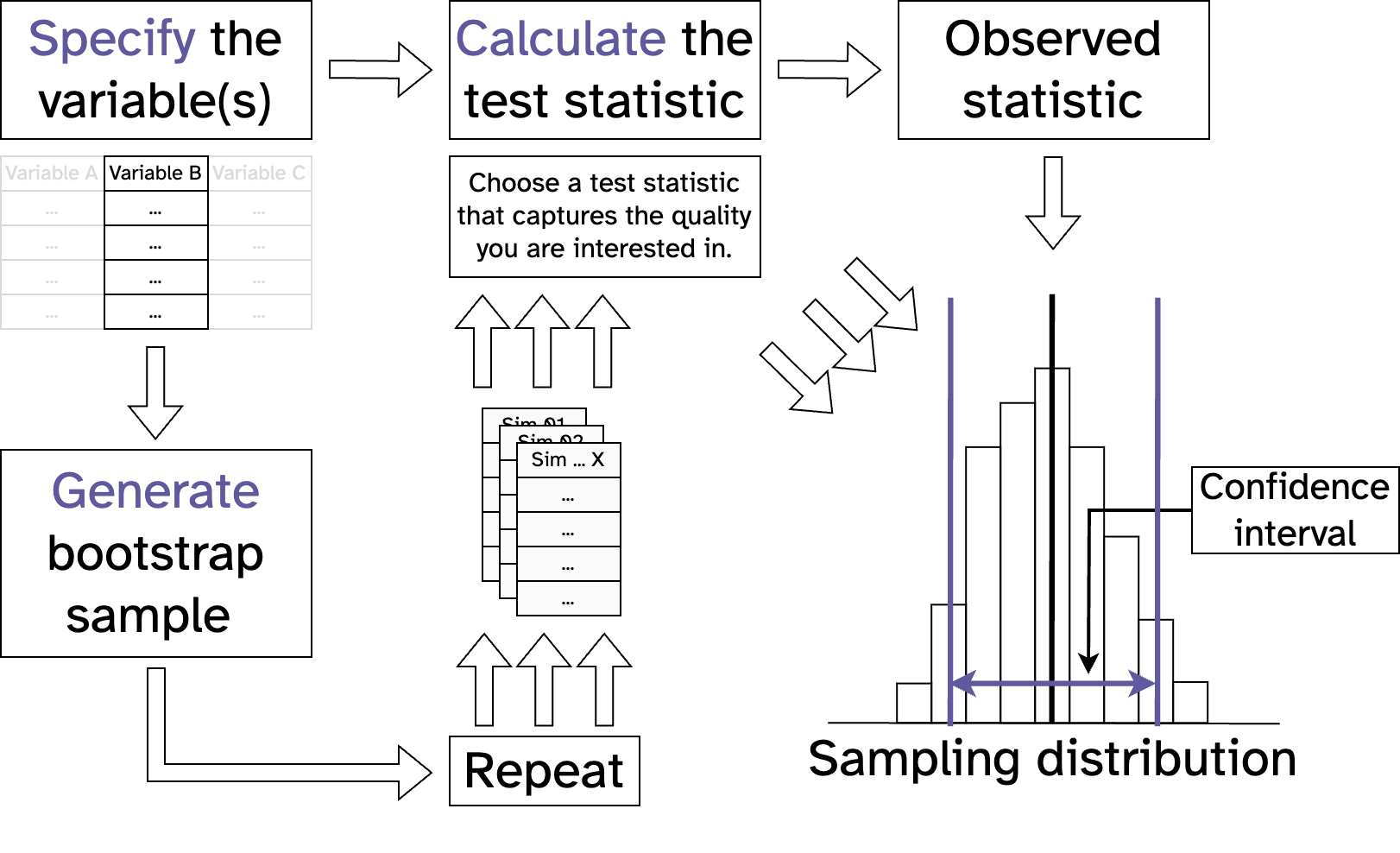

Resampling vs maths

Resampling vs maths

- Resampling-based inference:

  - Relies on computational methods such as bootstrapping

  - Uses the observed data to generate a sampling distribution

  - Often computationally intensive

  - Assumptions must be met for valid results*

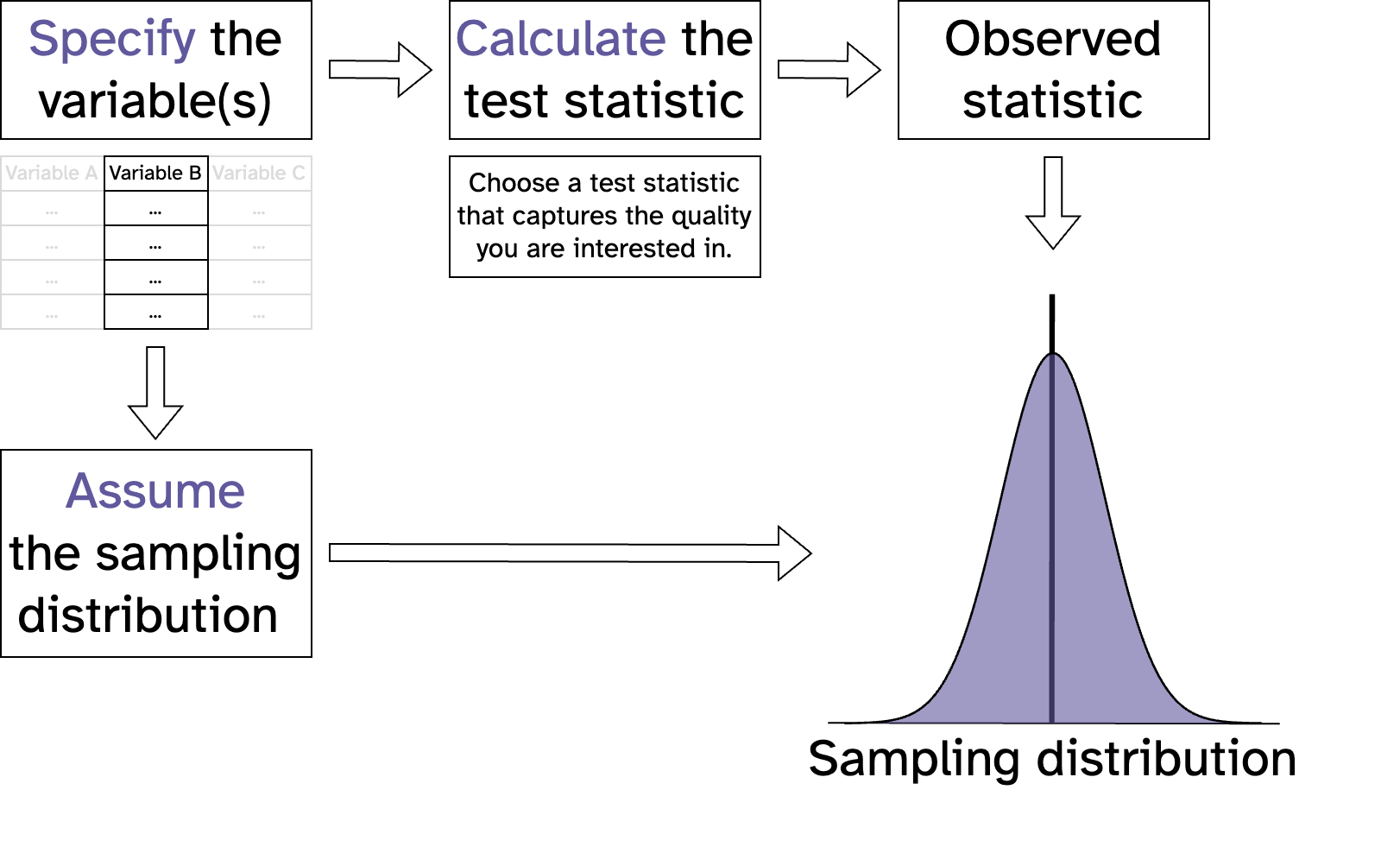

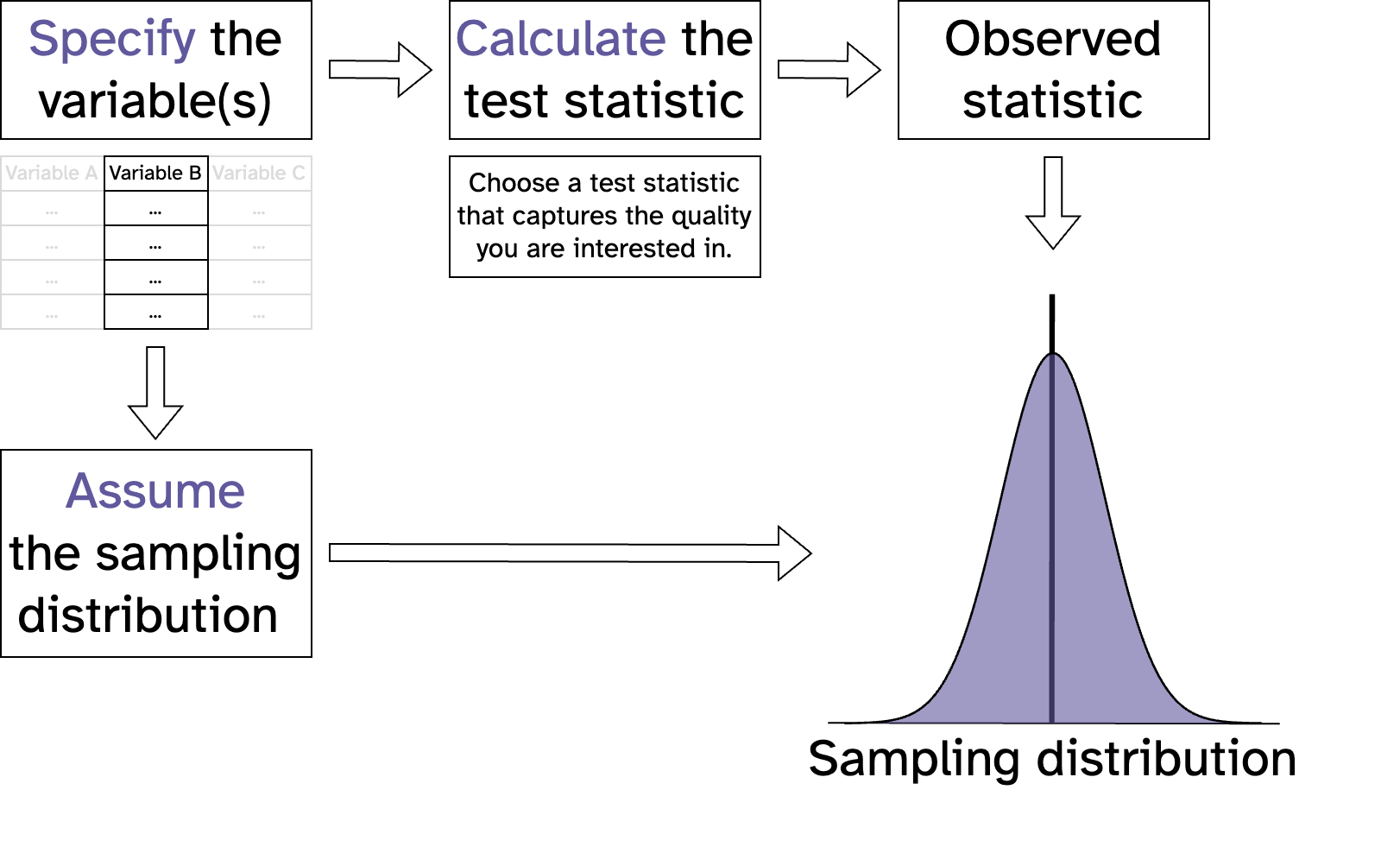

Resampling vs maths

- Maths-based inference:

  - Relies on mathematical models

  - Uses theoretical distributions to approximate the sampling distribution

  - Requires less computational effort than resampling

  - Assumptions must be met for valid results*

Resampling vs maths

- Is a mean different from a point value?

  - One-sample t-test (one-sample sign test)

- Are the means of two independent groups different from each other?

  - Two-sample t-test (Mann-Whitney U test)

- Are the means of two paired groups different from each other?

  - Paired-sample t-test (Wilcoxon matched-pairs test)

- Are the means of three or more groups different from each other?

  - One-way ANOVA (Kruskal-Wallis test)

- Is there a relationship between two categorical variables?

  - Chi-square test of independence

- Does a proportion differ from a point value?

  - One-sample proportion test

- Non-parametric alternatives are shown in brackets
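As a sketch of the maths-based route for the first question, here is a one-sample t-test by hand in Python. The body lengths are hypothetical, and the critical value 2.262 is the standard two-sided 5% cut-off for a t-distribution with 9 degrees of freedom, taken from a t table rather than computed (a library function such as SciPy's `scipy.stats.ttest_1samp` would normally run the whole test).

```python
from statistics import fmean, stdev

def one_sample_t(sample, mu0):
    """t statistic and degrees of freedom for H0: population mean == mu0."""
    n = len(sample)
    se = stdev(sample) / n ** 0.5  # standard error using the sample SD s
    return (fmean(sample) - mu0) / se, n - 1

# Hypothetical body lengths (cm): is the mean different from 12 cm?
lengths = [12.9, 13.4, 11.8, 13.1, 12.6, 13.8, 12.2, 13.0, 12.7, 13.5]
t, df = one_sample_t(lengths, mu0=12)

t_crit = 2.262  # two-sided 5% critical value of t with 9 df, from a t table
print(f"t = {t:.2f} on {df} df; reject H0 at alpha = 0.05: {abs(t) > t_crit}")
```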

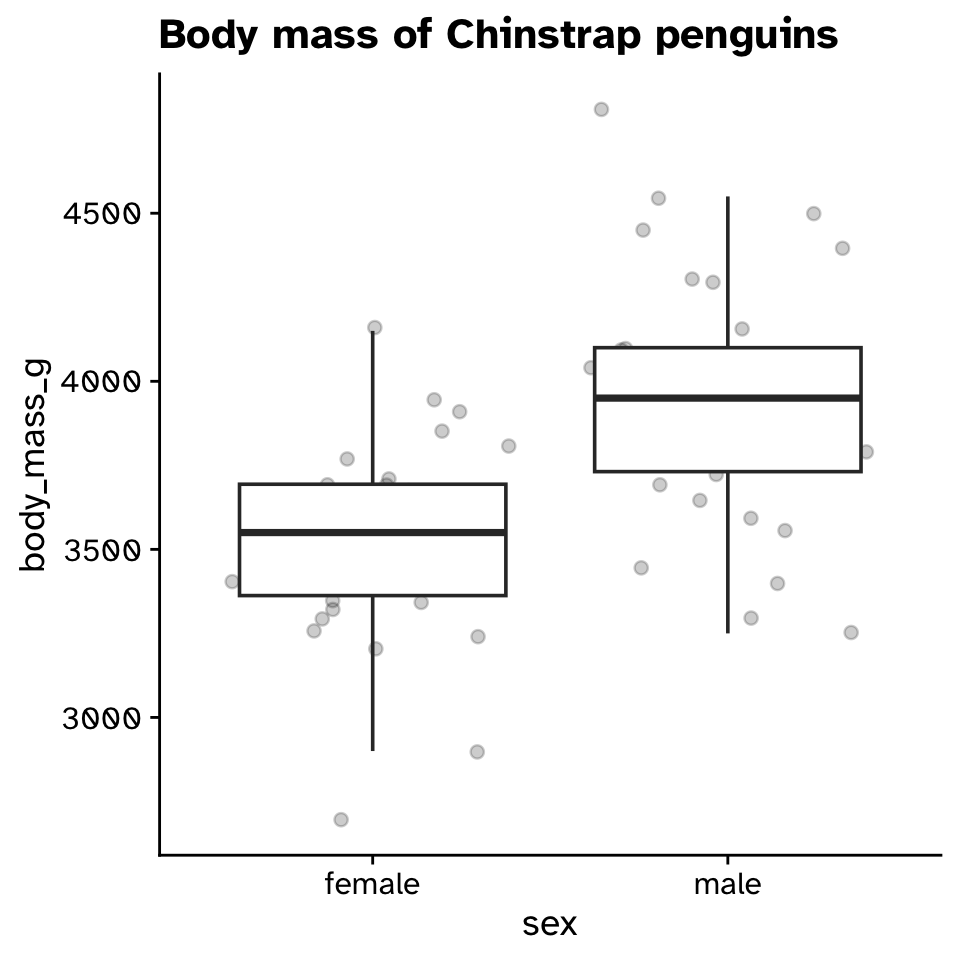

Resampling vs maths (diff in means CI)

- What is the difference in average size of males and females?
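A Python sketch comparing the two routes for a confidence interval on a difference in means, using hypothetical male and female sizes (not the lecture's data). The maths-based interval uses a normal approximation (\(\pm 1.96\) standard errors, with the unpooled standard error of the difference); with samples this small a t-based interval would be somewhat wider. The resampling interval is a simple bootstrap percentile interval.

```python
import random
from statistics import fmean, variance

# Hypothetical body sizes (not the lecture's data)
males = [23.1, 25.4, 22.8, 26.0, 24.7, 23.9, 25.2, 24.1, 22.5, 25.8]
females = [21.0, 22.6, 20.4, 23.1, 21.8, 22.0, 20.9, 21.5, 22.9, 21.2]

diff = fmean(males) - fmean(females)

# Maths-based: normal approximation with the unpooled standard error of the difference
se = (variance(males) / len(males) + variance(females) / len(females)) ** 0.5
maths_ci = (diff - 1.96 * se, diff + 1.96 * se)

# Resampling-based: bootstrap percentile interval (resample each group with replacement)
rng = random.Random(2026)
boot = sorted(
    fmean(rng.choices(males, k=len(males))) - fmean(rng.choices(females, k=len(females)))
    for _ in range(10_000)
)
boot_ci = (boot[249], boot[9749])  # approximately the 2.5th and 97.5th percentiles

print(f"difference   = {diff:.2f}")
print(f"maths CI     = ({maths_ci[0]:.2f}, {maths_ci[1]:.2f})")
print(f"bootstrap CI = ({boot_ci[0]:.2f}, {boot_ci[1]:.2f})")
```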

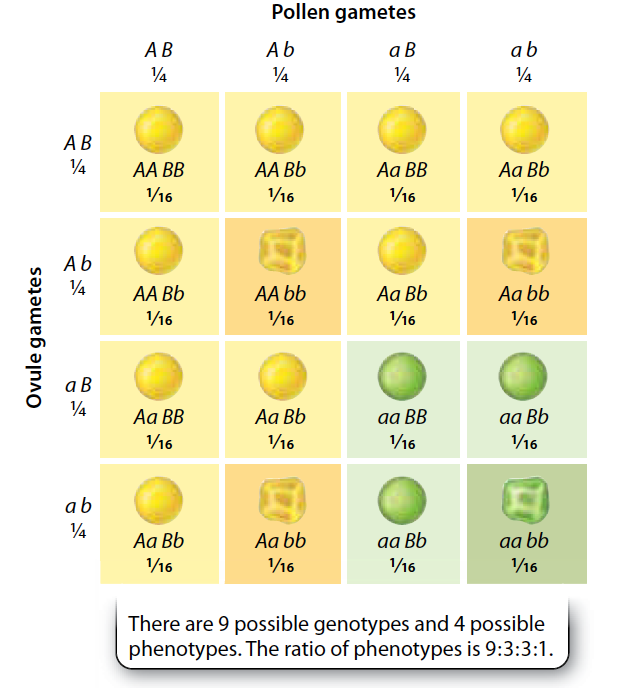

Resampling vs maths (\(\chi^2\) Goodness of fit)

Resampling vs maths (\(\chi^2\) Goodness of fit)

\[ \chi^2 = \sum_{i} \frac{(\text{Observed}_i - \text{Expected}_i)^2}{\text{Expected}_i} \]
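A Python sketch of the statistic with both a maths-based and a simulation-based p-value, for hypothetical counts in three categories under a null hypothesis of equal proportions. The closed-form tail \(P(\chi^2_2 > x) = e^{-x/2}\) holds only for 2 degrees of freedom, which is why three categories were chosen; other cases need a chi-square table or a library function.

```python
import random
from math import exp

def chi_square(observed, expected):
    """Goodness-of-fit statistic: sum of (Observed_i - Expected_i)^2 / Expected_i."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))

# Hypothetical counts in 3 categories; H0: all categories equally likely
observed = [30, 14, 16]
n, n_cats = sum(observed), len(observed)
expected = [n / n_cats] * n_cats
stat = chi_square(observed, expected)

# Maths-based p-value: chi-square distribution with n_cats - 1 = 2 degrees of freedom,
# whose upper tail has the closed form P(X > x) = exp(-x / 2)
p_maths = exp(-stat / 2)

# Resampling-based p-value: simulate many samples under H0 and compare statistics
rng = random.Random(5)
n_sims = 20_000
hits = 0
for _ in range(n_sims):
    draws = rng.choices(range(n_cats), k=n)  # each category equally likely under H0
    sim_counts = [draws.count(c) for c in range(n_cats)]
    if chi_square(sim_counts, expected) >= stat:
        hits += 1
p_sim = hits / n_sims

print(f"chi2 = {stat:.2f}, p (maths) = {p_maths:.4f}, p (simulation) = {p_sim:.4f}")
```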

Resampling vs maths (\(\chi^2\) Goodness of fit)

- Null hypothesis:

  - The sample came from the hypothesised distribution

  - The sample distribution is not different from the hypothesised distribution

- Alternative hypothesis:

  - The sample came from a different distribution to the one hypothesised

  - The sample distribution is different from the hypothesised distribution

Resampling vs maths: which to use?

- Whichever you understand best

- See the maths approach as adding extra information (assumptions about the sampling distribution):

  - If right, very powerful

  - If wrong, very wrong

  - Sometimes necessary

- Computation time is no longer an issue

  - Theory-based statistics were developed to get around long computation times

Resampling vs maths: which to use?

- Both approaches assume your sample is representative of the population you want to make inferences about

- Collecting more (representative) data will generally lead to more accurate inferences

- Neither is magic:

  - Garbage in, garbage out

  - Vulnerable to “p-hacking” and other unethical uses